Result not including complete data when including multiple hosts

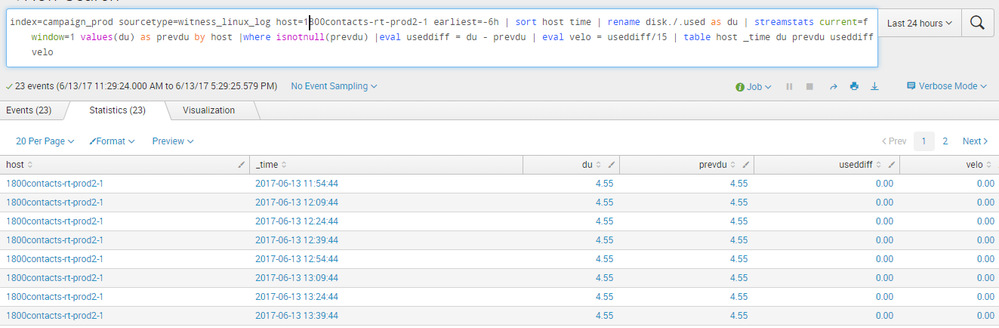

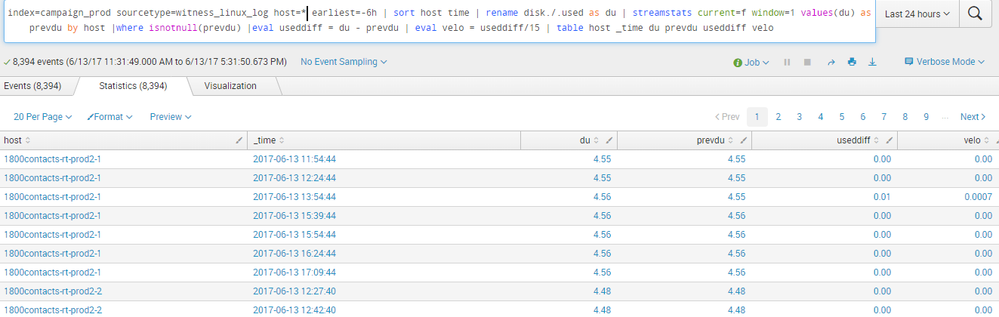

This question is slightly theoretical, so kindly bear with me. I am trying to make a timechart for multiple hosts on a single graph. Events are sampled every 15 minutes and I have to consider the data for the past 6 hours (i.e. 24 samples, minus 1 because the difference between consecutive samples has to be taken). The query works fine for a single host.

However, when I run it for multiple hosts, it does not return all the relevant samples.

As you can see, it returns just 3-4 samples for each host, and not consistently: the number of samples per host changes every time. What could be a possible reason for this, and is there any way to work around it so I can chart all of them together?
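As a quick check on the arithmetic, each host should contribute 23 points to the chart, assuming the 6-hour window holds exactly 24 samples. A tiny Python sketch; expected_points is a hypothetical helper, not part of Splunk:

```python
def expected_points(window_minutes, interval_minutes):
    """Return (samples, differences) for a look-back window sampled at a fixed interval."""
    samples = window_minutes // interval_minutes  # 360 // 15 = 24
    differences = max(samples - 1, 0)             # the first sample has no predecessor
    return samples, differences

print(expected_points(6 * 60, 15))  # (24, 23)
```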

Okay, there are a couple of potential sources of the issue. The first, as pointed out by @jplumsdaine22, is the misspelling of _time.

The second potential issue is that some records may have a null du. (By the way, for form's sake if nothing else, I'd put quotes around "disk./.used".)

Purely for efficiency, I'd also drop all unneeded fields before the sort.

If you expect to receive a record every 15 minutes, I would bin the time into 15-minute increments so that all the hosts are aligned on the same buckets. That lets you drop in placeholder records for every host and time bucket, so that any missing intervals are picked up and filled (see the appendpipe).
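To illustrate what binning and the placeholder rows achieve, here is a small Python sketch. It approximates bin _time span=15m by flooring each timestamp to a 15-minute bucket aligned to the Unix epoch (an assumption; Splunk's own alignment and timezone handling may differ), then builds every host/bucket pair from the hosts and buckets seen anywhere, which is what stats values(host) and values(_time) inside the appendpipe are meant to supply. The hosts and timestamps are hypothetical.

```python
from datetime import datetime, timedelta
from itertools import product

EPOCH = datetime(1970, 1, 1)
SPAN = timedelta(minutes=15)

def bin_time(ts, span=SPAN):
    """Floor a naive timestamp to the start of its bucket (buckets assumed aligned to the epoch)."""
    return ts - (ts - EPOCH) % span

def full_grid(rows):
    """Every (host, bucket) pair built from the hosts and buckets seen anywhere in rows."""
    hosts = sorted({host for host, _ in rows})
    buckets = sorted({bin_time(ts) for _, ts in rows})
    return list(product(hosts, buckets))

# Hypothetical readings: the two hosts report at slightly different minutes.
rows = [
    ("web1", datetime(2024, 1, 1, 10, 3)),
    ("web1", datetime(2024, 1, 1, 10, 31)),
    ("web2", datetime(2024, 1, 1, 10, 17)),
]

for host, bucket in full_grid(rows):
    print(host, bucket.strftime("%H:%M"))
```

Both hosts end up with the 10:00, 10:15 and 10:30 buckets, even though neither host reported in all three.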

```
index=foo ...
| table _time host "disk./.used"
| rename "disk./.used" as du
| bin _time span=15m
| appendpipe [| stats values(host) as host values(_time) as _time | eval du = -1.00]
| stats max(du) as du by host _time
| eval du=if(du<0,null(),du)
| streamstats current=f last(du) as prevdu by host
| eval du=coalesce(du,prevdu)
| where isnotnull(prevdu)
| eval useddu = du - prevdu
| eval velo = useddu/15
| table host _time du prevdu useddu velo
```

You'll notice we're using last instead of values; that means we don't have to set a window. We set a negative default (-1.00) and use max so that, if any record was received for a _time bucket, its value is used; otherwise the placeholder is nulled out and we eventually fall back to the last value we had.
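To make the fill-and-difference logic concrete, here is a Python simulation of the search from stats max(du) onward. It is a sketch under assumptions: buckets are plain integers (minutes from the start of the window), rows are processed per host in bucket order (the order stats ... by host _time produces), and fill_and_diff and the sample readings are hypothetical, not a Splunk API.

```python
PLACEHOLDER = -1.00  # the sentinel the appendpipe assigns to du

def fill_and_diff(readings, hosts, buckets, span_minutes=15):
    """Simulate the search from stats max(du) onward, per host, in bucket order.

    readings maps (host, bucket) -> du for buckets that really had an event.
    Returns (host, bucket, du, prevdu, useddu, velo) tuples.
    """
    out = []
    for host in sorted(hosts):
        last_du = None  # last non-null du seen so far for this host
        for bucket in sorted(buckets):
            # stats max(du): a real reading beats the -1.00 placeholder
            du = max(readings.get((host, bucket), PLACEHOLDER), PLACEHOLDER)
            # eval du=if(du<0,null(),du)
            du = None if du < 0 else du
            # streamstats current=f last(du) as prevdu by host: earlier rows only
            prevdu = last_du
            if du is not None:
                last_du = du
            # eval du=coalesce(du,prevdu)
            if du is None:
                du = prevdu
            # where isnotnull(prevdu)
            if prevdu is None:
                continue
            useddu = du - prevdu
            out.append((host, bucket, du, prevdu, useddu, useddu / span_minutes))
    return out

# Hypothetical data: web1 missed bucket 15, web2 missed buckets 0 and 30.
buckets = [0, 15, 30, 45]
hosts = ["web1", "web2"]
readings = {
    ("web1", 0): 10.0, ("web1", 30): 13.0, ("web1", 45): 16.0,
    ("web2", 15): 5.0, ("web2", 45): 8.0,
}

for row in fill_and_diff(readings, hosts, buckets):
    print(row)
```

A missed bucket comes out as a zero change, and the next real reading carries the whole change since the previous one. That is exactly the kind of smoothing that can hide bad data.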

Now, this version won't have the holes that your previous version had, and a null du will get filled in. However, if something in your system is emitting bad data, we've wallpapered over it and hidden it from view. It might be best to build up your search incrementally to identify exactly what caused the problem before implementing something like this.

FYI: your code and pictures are not visible to those of us behind corporate firewalls.

This might not be the solution, but I noticed you're sorting by time rather than _time, so the sort is not working as you might expect.
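A minimal Python sketch of why the sort key matters: differences between consecutive samples only make sense once each host's events are in time order. The event dictionaries, field values and per_host_deltas are hypothetical; the sample list is newest-first, the order in which a search typically returns events.

```python
from collections import defaultdict

def per_host_deltas(events, sort_key="_time"):
    """Group events by host, sort each group by sort_key, then diff consecutive du values."""
    groups = defaultdict(list)
    for event in events:
        groups[event["host"]].append(event)
    result = {}
    for host, evs in groups.items():
        # Sort on the real timestamp key; Python raises KeyError on a misspelled key
        evs = sorted(evs, key=lambda e: e[sort_key])
        result[host] = [later["du"] - earlier["du"] for earlier, later in zip(evs, evs[1:])]
    return result

# Hypothetical sample, newest first.
events = [
    {"host": "web1", "_time": 30, "du": 13.0},
    {"host": "web2", "_time": 30, "du": 7.0},
    {"host": "web1", "_time": 15, "du": 11.0},
    {"host": "web2", "_time": 15, "du": 6.0},
    {"host": "web1", "_time": 0, "du": 10.0},
]

print(per_host_deltas(events))  # {'web1': [1.0, 2.0], 'web2': [1.0]}
```

Differencing the same events in their original newest-first order would give negative values (11.0 - 13.0, 10.0 - 11.0 for web1).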