index clustering does not distribute primary buckets well for indexes

Hi forum,

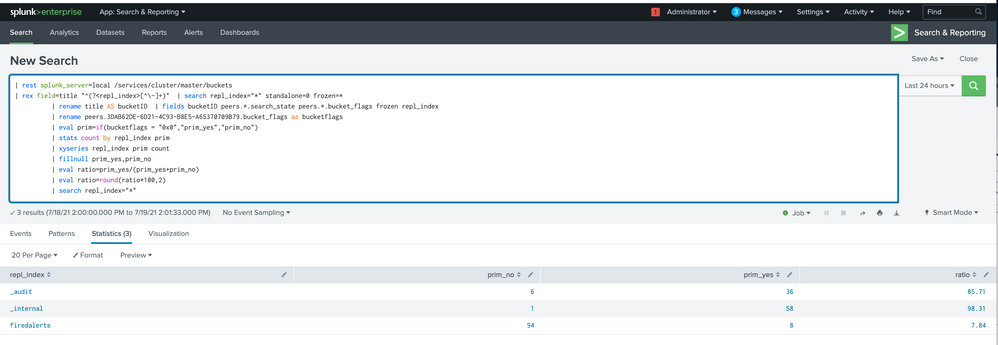

I have a two-peer, single-site indexer cluster (SF=2, RF=2). We noticed that the primary buckets are not evenly distributed across the peers for each index. We checked this with the following search:

| rest splunk_server=local /services/cluster/master/buckets

| rex field=title "^(?<repl_index>[^\~]+)" | search repl_index="*" standalone=0 frozen=*

| rename title AS bucketID | fields bucketID peers.*.search_state peers.*.bucket_flags frozen repl_index

| rename peers.3DAB62DE-6D21-4C93-B8E5-A65370709B79.bucket_flags as bucketflags

| eval prim=if(bucketflags = "0x0","prim_yes","prim_no")

| stats count by repl_index prim

| xyseries repl_index prim count

| fillnull prim_yes,prim_no

| eval ratio=prim_yes/(prim_yes+prim_no)

| eval ratio=round(ratio*100,2)

| search repl_index="*"
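For readers who do not use SPL, the arithmetic in the search above can be sketched in Python. This is only an illustration, not Splunk code: the function name, the record layout, the peer names and the sample flag values are all made up. The only thing taken from the search is the test that a `bucket_flags` value of `"0x0"` counts as primary.

```python
from collections import defaultdict


def primary_ratio_by_index(buckets, peer_guid, primary_flag="0x0"):
    """Return {index: percent of buckets counted as primary on peer_guid}.

    The primary test (bucket_flags == "0x0") is copied from the search in
    this thread; it is an assumption, not a documented Splunk rule.
    """
    counts = defaultdict(lambda: {"prim_yes": 0, "prim_no": 0})
    for bucket in buckets:
        # Like the rex in the search: the index is the text before the first "~".
        index = bucket["title"].split("~", 1)[0]
        flags = bucket["peers"].get(peer_guid, {}).get("bucket_flags")
        key = "prim_yes" if flags == primary_flag else "prim_no"
        counts[index][key] += 1
    # Both counters start at 0, which plays the role of fillnull.
    return {
        index: round(100 * c["prim_yes"] / (c["prim_yes"] + c["prim_no"]), 2)
        for index, c in counts.items()
    }


# Hypothetical sample records; real REST output has many more fields.
sample = [
    {"title": "main~1~AAAA", "peers": {"PEER1": {"bucket_flags": "0x0"}}},
    {"title": "main~2~AAAA", "peers": {"PEER1": {"bucket_flags": "0x1"}}},
    {"title": "main~3~AAAA", "peers": {"PEER1": {"bucket_flags": "0x1"}}},
    {"title": "web~1~AAAA", "peers": {"PEER1": {"bucket_flags": "0x0"}}},
    {"title": "web~2~AAAA", "peers": {"PEER1": {"bucket_flags": "0x0"}}},
]

ratios = primary_ratio_by_index(sample, "PEER1")
print(ratios)
```

In a two-peer cluster, a ratio near 50 for every index would mean an even spread. Values close to 0 or 100 correspond to the skew described in this post.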

For each index, more or less all primaries are on one indexer or the other. This results in uneven load, as we have a search hotspot on one index.

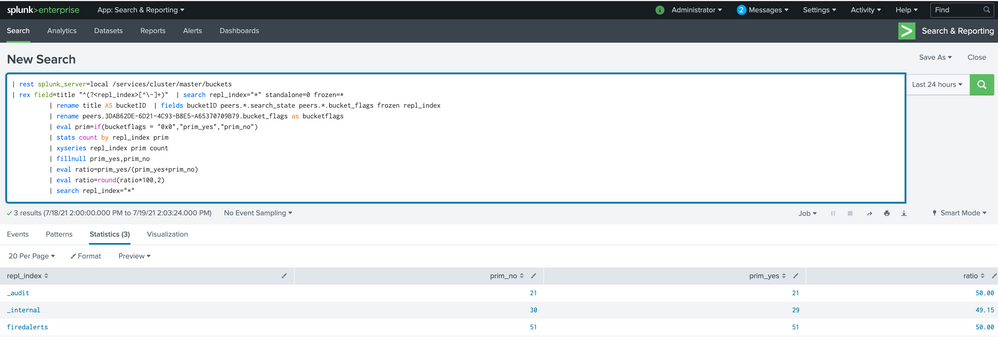

We got a far better distribution after we set SF=1, removed excess buckets, and set SF=2 again.

Unfortunately, after stopping an indexer for a while or doing a rolling restart, the distribution is very uneven again (as seen in the first screenshot).

It is also possible to get an even distribution by stopping the cluster master and the peers at the same time and starting them again, but we lose data during that time. Restarting any single component on its own doesn't fix the issue.

We tried to rebalance primaries using:

curl -k -u admin:plaseentercreditcardnumber --request POST https://localhost:8089/services/cluster/master/control/control/rebalance_primaries

Any hints on how to fix this? We are using v8.0.7.

Best regards,

Andreas


Hi,

After further testing, it looks like upgrading to v8.2.1 fixes the issue, but I haven't found anything useful in the release notes. 😞

Regards,

Andreas


Hi all,

v8.1.5 also seems to have no issues.