Splunk 8.0.2 report acceleration broken for reports using inputlookup command in subsearches

Prior to updating to Splunk Enterprise 8.0.2, scheduled accelerated reports ran extremely fast:

Report A

Duration: 37.166

Record count: 314

After updating to Splunk Enterprise 8.0.2, the same report ran extremely slowly:

Report A

Duration: 418.621

Record count: 300
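
For scale, the two Report A durations above can be turned into a slowdown factor. This is only a small arithmetic sketch; the variable names are mine, and the values are copied from the two runs as posted.

```shell
# Durations copied from the two runs of Report A above.
before=37.166
after=418.621

# POSIX shell has no floating-point arithmetic, so delegate the division to awk.
ratio=$(LC_ALL=C awk -v a="$after" -v b="$before" 'BEGIN { printf "%.1f", a / b }')
echo "Report A took ${ratio}x as long after the upgrade"
```

That works out to roughly an elevenfold increase for a slightly smaller record count.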

The release notes for 8.0.2 don't mention any changes to acceleration or summary indexing, so is it safe to assume this is a fluke?

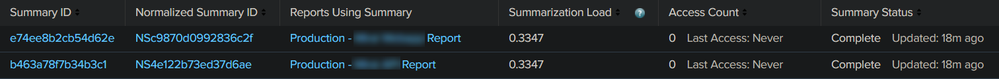

The massive increase in report generation (job) time of the scheduled accelerated reports appears to be caused by them no longer accessing the corresponding report acceleration summary. The "Access Count" never goes up when the scheduled reports are run.

Guess we'll wait for 8.0.3 to fix this.

Troubleshooting steps attempted:

- Manually rebuild Report Acceleration Summaries

- Delete all affected Report Acceleration Summaries

- Delete and recreate affected production reports – recreated schedule and checked box for acceleration

- Check filesystem permissions of inputlookup csv - confirmed -rw-rw-r-- splunk splunk

Issue not fixed in Splunk Enterprise 8.1.0

When is this getting fixed?

Confirmed as a bug, workaround is:

$ cat limits.conf

[search]

phased_execution_mode = singlethreaded

The fix will be available in a subsequent release. Contact Splunk Support to find out when it will ship.

FYI, SPL-184327 - 8.0.2 Accelerated Report with "append" doesn't use the summary; worked in 8.0.1 before
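
Since the setting only takes effect if it is spelled correctly and sits under the [search] stanza, a quick check can help before restarting. The sketch below is not from Splunk; check_workaround is a hypothetical helper name, and the demo runs against a temporary file rather than a real limits.conf (which I'd assume normally lives somewhere like $SPLUNK_HOME/etc/system/local/).

```shell
# Hypothetical helper: succeeds only if the given limits.conf sets
# phased_execution_mode = singlethreaded inside the [search] stanza.
check_workaround() {
    awk '
        # Track which stanza the current line belongs to.
        /^\[/ { in_search = ($0 == "[search]") }
        in_search && /^[ \t]*phased_execution_mode[ \t]*=[ \t]*singlethreaded[ \t]*$/ { found = 1 }
        END { exit (found ? 0 : 1) }
    ' "$1"
}

# Demo against a throwaway file so nothing under $SPLUNK_HOME is touched.
demo=$(mktemp)
printf '[search]\nphased_execution_mode = singlethreaded\n' > "$demo"
if check_workaround "$demo"; then
    echo "workaround present"
else
    echo "workaround missing or misspelled"
fi
```

On a real instance, point check_workaround at the actual file instead of the demo copy. It only inspects one file, so it won't tell you what the merged configuration looks like across apps.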

I updated our Splunk instance from 8.0.1 to 8.0.4.1 today and this issue is not resolved. The release notes (https://docs.splunk.com/Documentation/Splunk/8.0.4/ReleaseNotes/Fixedissues#Splunk_Enterprise_8.0.4....) indicate SPL-184327 was supposed to be fixed in 8.0.4.

As noted in the original issue, report acceleration is not working correctly for reports that use the inputlookup command (example: csv input) in subsearches. As a result, the affected reports take far longer to generate.

Any chance this issue can be reviewed for a future Splunk update?

The previously described workaround of adding phased_execution_mode = singlethreaded to the [search] stanza in limits.conf helps alleviate the issue, but it leads to other performance issues, such as jobs stuck at "Finalizing..." in the GUI.

I tested:

index=_internal earliest=-1m

| stats count by _time

| append [inputlookup test.csv ]

I ran this in 8.0.4.1 because it failed in the previously mentioned version, but it worked just fine: it correctly found the report acceleration summary and reported that summary IDs were found when I ran the search.

I'm assuming your example is more complicated. I'm on default settings and I cannot replicate this one anymore...

Outside of the previously described workaround, my *.conf files are in the default state.

The csv in my example is just a list of specific identifiers (simple integers) that are referenced in the inputlookup function in the search below:

index="myindex" [| inputlookup input.csv | fields id | rename id as ID | format]

| fields NAME ID

| stats count(RECORD) as "Record" by NAME _time | dedup NAME sortby _time | rename NAME as "Username" | where _time>=relative_time(now(), "-2d@h") AND _time<=relative_time(now(), "@h") | eval mytime=strftime(_time,"%Y-%m-%dT%H:%M:%SZ") | table "Record" mytime | outputcsv export.csv

I replicated it and confirmed that the limits.conf change does fix the issue (again)

FYI I did:

| makeresults count=10 | streamstats count | eval id=count | outputlookup input.csv

and my report is:

index="_internal" [| inputlookup input.csv | fields id | rename id as linecount | format]

| fields component linecount

| stats count as "Record" by component _time | dedup component sortby _time | rename component as "Username" | where _time>=relative_time(now(), "-2d@h") AND _time<=relative_time(now(), "@h") | eval mytime=strftime(_time,"%Y-%m-%dT%H:%M:%SZ") | table "Record" mytime | outputcsv export.csv

I'm glad to hear you were able to reproduce the issue. The previously mentioned workaround is acceptable as a short-term solution. However, it's causing other search-related performance issues, so a long-term fix would be greatly appreciated.

@gjanders Has this been assigned an SPL issue number yet?

When can we expect one to be assigned? The workaround is not a solution due to the report generation performance impact.

Currently it is waiting on the development team. There are no updates from support yet, but I have checked in just in case.

Using the workaround of

# add to limits.conf

[search]

phased_execution_mode = singlethreaded

leads to searches stuck at "Finalizing job..." in the GUI.

After consulting old posts, I tried another workaround of

# add to limits.conf

[search]

remote_timeline_fetchall = 0

However, this had no impact. Are there any other workarounds I can try?

Fantastic news, thanks!

No problem, perhaps accept so this is closed off?

Sure, done.

What's the search?

Apart from the inputlookup command, the search itself is irrelevant. Once I remove the inputlookup subsearch and hardcode a few values from the csv instead, the search accelerates as expected.

After further testing, it appears that reports that use the inputlookup command no longer accelerate correctly after the Splunk Enterprise 8.0.2 update. I reached this conclusion by removing the inputlookup command from the two reports mentioned in my question and hardcoding a few example values into the search query to test.

After doing so, the report acceleration performed as expected. Sadly this isn't a solution and only a workaround until the issue is (hopefully) fixed in future Splunk Enterprise releases.
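
For anyone who wants to repeat the hardcoding test with more than a handful of values, the filter can be generated from the csv instead of typed by hand. This is only a sketch under assumptions: the ids below are made-up stand-ins, the file is a temporary copy rather than the real input.csv, and the OR-ed ID="..." form is my approximation of what the subsearch expands to, not output captured from Splunk.

```shell
# Throwaway stand-in for input.csv: a header row plus a few hypothetical integer ids.
csv=$(mktemp)
printf 'id\n101\n102\n103\n' > "$csv"

# Skip the header and blank lines, then join the ids into an OR-ed filter on the ID field.
filter=$(awk 'NR > 1 && NF { printf "%sID=\"%s\"", sep, $1; sep = " OR " }' "$csv")

# Print a base search that can be pasted in place of the inputlookup subsearch.
echo "index=\"myindex\" ( $filter )"
```

The printed line replaces only the first line of the report; the rest of the pipeline stays as it was.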

CONFIRMED

Splunk 8.0.2 caused report acceleration to break. I was able to correct the issue by rolling back to version 8.0.1 via the steps below:

wget -O splunk-8.0.1-6db836e2fb9e-linux-2.6-x86_64.rpm 'https://www.splunk.com/bin/splunk/DownloadActivityServlet?architecture=x86_64&platform=linux&version=8.0.1&product=splunk&filename=splunk-8.0.1-6db836e2fb9e-linux-2.6-x86_64.rpm&wget=true'

sudo rpm -Uvh --oldpackage splunk-8.0.1-6db836e2fb9e-linux-2.6-x86_64.rpm

sudo service splunk start