Process of Indexed Extraction Configuration

Hi All!

I'm currently running into a very weird situation with a Splunk instance I inherited. I set up props.conf through the UI on my dev instance: I indexed a small number of events and then used the UI to parse the data, which generated the props.conf. I should mention that my dev instance is a single host.

I then transferred the props.conf to our test environment, which consists of 1 forwarder, 2 indexers (in a "fake" cluster, since there are fewer than 3 indexers), 1 master, and 3 search heads in a search head cluster. Just like my dev instance, the test instance worked properly: the fields showed up when searching on a search head.

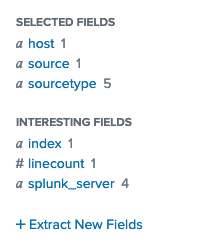

Finally, I transferred this same props.conf to the prod instance, which consists of 3 forwarders, 4 indexers in an indexer cluster, 1 master, and 5 search heads in a search head cluster. In this environment, none of the fields are extracted the way they were in the test/dev instances, but the events are still being parsed correctly as JSON. These are the only fields I'm currently getting back:

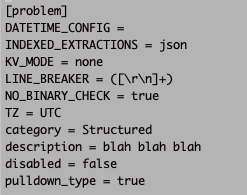

I've exhausted everything I know about how the configuration/field extraction is determined, and I still can't figure it out. I'm sure there's something I'm missing, and given that it's an instance I've inherited, I figured I'd post here to see what this wonderful community could come up with. Here is a snippet from my props.conf, which is representative of how most of the sourcetypes are configured:

This props.conf lives only on the indexers (as far as I know) and I didn't find any other props.conf files on the search heads (in $SPLUNK_HOME/etc/system/local).

Any help is greatly appreciated.

Thanks!

You have not deployed the props.conf configurations to the correct place. Unlike every other indexing-related configuration, which should be deployed on the first full instance of Splunk that receives the data (either the HF or the indexer tier), the INDEXED_EXTRACTIONS configuration must be deployed to the forwarder: the server that has the files and the inputs.conf that is set to pull in the JSON. So send it to your forwarder tier, restart all Splunk instances there, and it will work for new data you forward in.
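
A minimal sketch of what that could look like on the forwarder, assuming a file-monitor input. The monitored path, index, and sourcetype name (my_json) are hypothetical placeholders, not taken from the original post:

```ini
# inputs.conf on the forwarder (hypothetical path, index, and sourcetype)
[monitor:///var/log/myapp/*.json]
index = main
sourcetype = my_json

# props.conf on the SAME forwarder: structured (indexed) extraction
# happens on the instance that reads the file
[my_json]
INDEXED_EXTRACTIONS = json
```

On the search heads, KV_MODE = none is commonly set for the same sourcetype so the JSON fields are not extracted a second time at search time.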

I've also tried this, and it did not fix my issue.

Did you fix this issue? Does HEC support explicit indexed_extractions of CSV or JSON files when it is set to raw mode?

I should've mentioned that we are using the HEC on the forwarders to transfer the data to the indexers. Does that change anything about what you suggested? I'm also still confused about how the configuration works in the test environment without the props.conf on the forwarder.

If you are using HEC, then you are not using INDEXED_EXTRACTIONS. Which is it?

Correct me if I'm wrong, but I'm not sure why the two have to be separate. I was under the impression the flow went like this:

host -- (through HEC) --> Forwarders ----> Indexers

How would this affect the extractions at all?

That is an insane configuration. Typically HEC runs directly on the Indexers. If this is really your architecture, you need a reboot.

I agree the architecture needs to be rethought but according to this article, the HEC can run on either the forwarders or the indexers so I'm not really sure what you're getting at - https://docs.splunk.com/Documentation/Splunk/7.2.4/Data/ScaleHTTPEventCollector

I see what you're saying. After inheriting this instance, my goal was to rethink/re-architect everything, but I've been swamped with other things and have limited knowledge, so I'm all for learning the best practice. Would you suggest having HEC on the indexer cluster, keeping the props.conf there, and going from there?

Yes, definitely. This is 100% upside (no downside).
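
A minimal sketch of what HEC on the indexer tier could look like, assuming the inputs.conf below is pushed identically to every indexer peer (for example from the cluster master's master-apps directory) so the token is the same on all peers. The token name, token value, index, and sourcetype are hypothetical placeholders:

```ini
# inputs.conf pushed to all indexer peers (hypothetical names and values)

# Global HEC settings: enable the collector on its default port
[http]
disabled = 0
port = 8088

# One token stanza; the same token value must exist on every peer
[http://my_hec_token]
disabled = 0
token = 00000000-0000-0000-0000-000000000000
index = main
sourcetype = my_json
```

Clients (or a load balancer in front of the peers) would then post to port 8088 on the indexers directly, removing the intermediate forwarder hop.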

Awesome, thanks for your help woodcock, it's greatly appreciated.

You have created a completely unnecessary bottleneck with your intermediate forwarder tier. What is worse, apparently you are writing HEC data to disk there so that you can use INDEXED_EXTRACTIONS, which is nuts, because it defeats the primary benefit of HEC: diskless I/O.

We need to put INDEXED_EXTRACTIONS = json in the indexer props and KV_MODE = none in the search head props.

OR

Try putting KV_MODE = json in the search head props and remove all other JSON-related settings from props.
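
A sketch of the two options above as props.conf stanzas, using a hypothetical sourcetype name my_json. This mirrors the reply as written; note that an earlier reply in this thread says INDEXED_EXTRACTIONS must live on the forwarder that reads the file rather than on the indexers.

```ini
# Option 1: index-time JSON extraction
# props.conf on the parsing tier (indexers, per this reply)
[my_json]
INDEXED_EXTRACTIONS = json

# props.conf on the search heads: don't re-extract the same fields
[my_json]
KV_MODE = none

# Option 2: search-time JSON extraction only
# props.conf on the search heads; no INDEXED_EXTRACTIONS for this sourcetype anywhere
[my_json]
KV_MODE = json
```

The trade-off: option 1 writes the JSON fields into the index (larger index files, but the fields are available to tstats), while option 2 extracts them only at search time.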

As for the second solution, we want indexed extractions, not search-time extractions on the search heads, which I believe that option would cause.

Why would it work correctly on my test environment (no props.conf on the search heads) then?