How often/quickly does a Splunk universal forwarder read a file?

Hi,

I have some customers who are VERY concerned about the Splunk universal forwarder on their servers. We ran tests, and it performed fine, but they are still concerned and would like to know exactly how often Splunk "wakes up" (their term) to read files. I know that splunkd is always running, but is there some timeframe for how often it checks for new data?

My understanding is that interval is only for modular and scripted inputs. Monitor inputs are basically "as fast as we can"

There are two main elements to monitor inputs: the File Update Notifier (FUN) and the "Reader".

- FUN will notify the TailReader when it sees a change in a file at OS level. FUN will then stop monitoring the file till the readers (Tail or Batch) have finished reading the file.

- The TailReader looks at the file size, calculates the bytes to be read and passes it to the Batch Reader if need be (>20MB).

- The readers start reading the file in 64KB chunks per iteration; HOWEVER, the readers will keep reading (keep iterating) until they reach the end of the file.

- If the file has more data (more than the first time we saw a change in the file), we will read that newly appended data.

- This means that if the file is growing really fast, we read more data than we originally planned to. This is why we sometimes see >100% reads on the input status page for files that are growing very fast.

- The other thing to note is that the readers block on the Parsing queue to insert Pipeline Data. That is, if the queue is full, the readers will wait until the queue frees up before reading more data.

- Once the readers are done reading till the EOF, they notify FUN to start monitoring for changes again.

So the "interval" is really the iteration time, which is dependent on:

- How "active" the files are, how much data is written to the files, and the speed of the CPU and disk.

- How fast we process the Pipeline Data further down the pipeline.
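For anyone who wants to see the steps above in code, here is a rough Python sketch of the read loop. It is not Splunk's implementation: the names `choose_reader`, `read_until_eof`, and `max_queue_len` are made up for illustration, and only the 64KB chunk size and the 20MB hand-off threshold come from the description above. A real reader would block on a full queue; the sketch raises instead so it can run without a consumer thread.

```python
import io
from collections import deque

CHUNK_SIZE = 64 * 1024              # bytes read per iteration (from the description above)
BATCH_THRESHOLD = 20 * 1024 * 1024  # backlog size handed to the batch reader (from the description above)


def choose_reader(bytes_to_read):
    """Pick a reader by backlog size, mirroring the TailReader step (hypothetical helper)."""
    return "batch" if bytes_to_read > BATCH_THRESHOLD else "tail"


def read_until_eof(stream, offset, queue, max_queue_len=1000):
    """Read from offset in CHUNK_SIZE pieces until EOF and return the new offset.

    max_queue_len is an assumed limit standing in for the parsing queue size.
    """
    stream.seek(offset)
    while True:
        if len(queue) >= max_queue_len:
            # A real reader would wait here until the queue drains.
            raise RuntimeError("parsing queue full")
        chunk = stream.read(CHUNK_SIZE)
        if not chunk:
            # EOF reached: at this point the notifier would resume watching the file.
            return stream.tell()
        queue.append(chunk)


if __name__ == "__main__":
    data = io.BytesIO(b"x" * (150 * 1024))
    parsing_queue = deque()
    new_offset = read_until_eof(data, 0, parsing_queue)
    print(choose_reader(150 * 1024), new_offset, len(parsing_queue))
```

Because the loop stops only at EOF and not at the size seen when the change was first noticed, anything appended during the read is picked up in the same pass, which is consistent with the >100% figures mentioned above.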

"VERY concerned": are they worried about performance, data loss, or something else? Any additional context would help us give details that actually address their concerns.

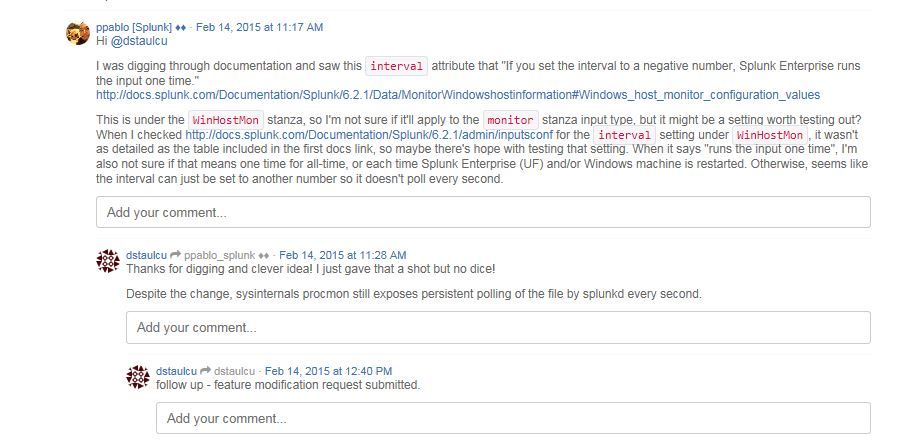

A nice discussion about it at Any known method to alter polling interval for file monitoring?

ppablo_splunk points to Monitor Windows host information

which refers to the interval parameter, defined as:

-- How often, in seconds, to poll for new data. If you set the interval to a negative number, Splunk Enterprise runs the input one time. If you do not define this attribute, the input will not run, as there is no default.

I thought the interval only applied to scripted inputs and modular inputs but not monitor stanzas. I'm not seeing interval under the monitor stanzas in the docs: http://docs.splunk.com/Documentation/Splunk/latest/admin/Inputsconf#MONITOR:

SloshBurch is correct. File monitor is different.

The Splunk forwarders (any type, UF or full version) actively monitor the files they are configured to watch. There is no interval to control it. There could be a delay in "reading" a file if there are too many files and/or the amount of data is more than the thruput configured for it. Other than that, it's real-time monitoring.

Thanks. So is it event driven?

Is the write/update to the file triggering the splunkd process to read the file?

Or does “real-time” really mean some sub second interval?

Let's just say that Splunk builds a list of the files and directories it's monitoring and goes through the list continuously to see if something new has been found. Depending on the number of files being monitored, the time to check whether a file has been updated can range from a fraction of a second to a few seconds.

somesoni2 is correct.

Basically, Splunk is constantly calling stat() on the paths that match the monitored inputs. This system call is very quick when it does not have to go to the physical disk (which, for spinning disks, is the slow part). So the big factors are CPU clock speed and how fast the stat() call returns. On the recommended hardware spec and in a typical situation, going through several thousand paths won't take a second.
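If you want a rough number for your own servers, a small Python sketch like the one below times one stat() pass over a set of files. It only illustrates the "walk the list and stat() each path" idea; it is not how splunkd is written, and `scan_once`, `time_scan`, and the choice of size plus mtime as the change signature are assumptions made for this example.

```python
import os
import tempfile
import time


def scan_once(paths, seen):
    """stat() every path once and return the paths whose size or mtime changed."""
    changed = []
    for path in paths:
        try:
            st = os.stat(path)
        except FileNotFoundError:
            continue  # file disappeared between listing and stat()
        signature = (st.st_size, st.st_mtime_ns)
        if seen.get(path) != signature:
            seen[path] = signature
            changed.append(path)
    return changed


def time_scan(num_files=2000):
    """Create num_files small files and time one full stat() pass over them."""
    with tempfile.TemporaryDirectory() as tmp:
        paths = []
        for i in range(num_files):
            path = os.path.join(tmp, f"file_{i}.log")
            with open(path, "w") as fh:
                fh.write("line\n")
            paths.append(path)
        seen = {}
        start = time.perf_counter()
        changed = scan_once(paths, seen)
        elapsed = time.perf_counter() - start
        return len(changed), elapsed


if __name__ == "__main__":
    count, elapsed = time_scan()
    print(f"stat() pass over {count} files took {elapsed:.4f} s")
```

The files here were just written, so their metadata is almost certainly cached; a pass over cold files on a slow disk can take longer, which is the caveat made above.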

If this is not enough, we probably need more information for your use case.

Your user is VERY concerned about "reading files"? What is an acceptable speed for indexing? "Reading" is not the only thing that happens before the data is searchable. On a forwarder, "reading data" might not be the main part of the speed a user needs to be concerned about. We may need more detail on the use case to understand why the user is concerned about "reading data". What is their goal? How many files need to be monitored? How much data are you monitoring, e.g. 1GB per second per file for 1k files?

Thanks. It's less about reading the files (which these people don't actually use) and more about how Splunk behaves, so that it doesn't affect other apps on this server. I'm reading NTP infrastructure files, but these are trading servers, and the app owners are very concerned about any possible tiny effect and want to know how the forwarder works. I know splunkd is always running. So, how often does it check for new data in the monitored files? Milliseconds?

It's continuously checking for new data in monitored files; there is no "often"/"frequency" involved. If the other app owners are worried about the impact of having a UF, the footprint of the UF is very small, and its consumption of resources depends on the number of files/directories being monitored. So, as long as you're monitoring a small number of files and folders, there is virtually no impact on currently running applications.

Also, see the following link for options to limit the resource usage of the UF, so the impact is always controlled.

https://answers.splunk.com/answers/47003/limit-the-memory-used-by-universal-forwarder.html

a212830, I still do not get your "concern". What is an acceptable speed? This thread has proper responses on how Splunk monitors files in general. It sounds like you cannot accept this information. We really need to know what exactly the concern is. What is a "tiny effect"? It is very vague. Is it time? Can you give an example, or put the "effect" and "concern" in numbers? With clearer, real examples, we can give clearer responses. Otherwise, the responses above sound very reasonable to me.

Bingo. Thanks @masa

I don't specifically know the answer to your question, but you can get empirical numbers for them using _indextime:

index=your_PITA_index | eval delay=_indextime-_time | stats avg(delay) max(delay) p99(delay) by source

In my experience, unless the Forwarder or the Indexer is CRAZY busy, logs are injected into Splunk in sub-second times.
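To make it clear what that search measures, here is the same arithmetic as a small Python sketch: per source, the delay is index time minus event time, summarised as average, maximum, and 99th percentile. The function name `delay_stats` and the tuple layout are made up for this example, and the percentile uses a simple nearest-rank rule, which may not match how Splunk computes p99.

```python
import math
from collections import defaultdict


def delay_stats(events):
    """Summarise delay per source from (source, event_time, index_time) tuples."""
    delays = defaultdict(list)
    for source, event_time, index_time in events:
        delays[source].append(index_time - event_time)

    summary = {}
    for source, values in delays.items():
        values.sort()
        # Nearest-rank 99th percentile (an assumption; Splunk may compute it differently).
        rank = max(1, math.ceil(0.99 * len(values)))
        summary[source] = {
            "avg": sum(values) / len(values),
            "max": values[-1],
            "p99": values[rank - 1],
        }
    return summary


if __name__ == "__main__":
    sample = [
        ("/var/log/a.log", 100.0, 100.5),
        ("/var/log/a.log", 200.0, 201.5),
        ("/var/log/b.log", 10.0, 10.25),
    ]
    for source, stats in delay_stats(sample).items():
        print(source, stats)
```

Keep in mind that this delay covers the whole path from the event timestamp to indexing, so it is an upper bound on how long the forwarder took to notice the new data, not a direct measurement of it.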

Thanks. Unfortunately, they want a specific answer to a specific question - how often does splunk read the file, or check to see if new data is coming in?