In an indexer cluster, I cannot meet search factor or replication factor due to a bucket being "not serviceable". How to resolve?

Hello.

I have a 2-node indexer cluster and all data is searchable. However, I cannot get the search factor or replication factor to be met because of what appears to be a single bucket.

When I look at Fixup Tasks - Pending, I have a single task under each of search, replication and generation, all reporting their Current State as "cannot fix up as bucket is not serviceable".

Trigger condition for search and rep is: add peer=(bucket-x) commit lone vote

Trigger condition for Generation is: non-streaming failure - src=(bucket-x) tgt=(bucket-y) failing=tgt

I have run splunk rebuild against the path to the bucket and it seemed to finish without reporting errors. However, this didn't resolve the issue.

Thanks for any ideas.

Possibly port 8089 (the default splunkd management port) is not open between the indexers; it needs to be.
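One quick way to rule this out is a plain TCP connect from each indexer to the other. Below is a minimal sketch, assuming the management port is still the default 8089; the function name `port_is_open` is made up for illustration, and a successful connect only shows the port is reachable, not that splunkd is healthy behind it.

```python
import socket


def port_is_open(host: str, port: int = 8089, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the host and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and DNS failures alike.
        return False
```

Run it on each indexer against the other peer, for example `port_is_open("other-indexer-hostname")`, where the hostname is a placeholder for your own.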

"Not serviceable" is potentially an initial state of a fixup task. In general, it means the cluster master (CM) is waiting for a cluster peer (CP) to report back and register the bucket for the fixup task. A bucket should not stay "not serviceable", though, so it could be a bug if you are really stuck in this state.

Which version are you using? If the fixup is stuck in the "not serviceable" state and none of the indexers log any errors or warnings for that bucket in splunkd.log, it must be a bug. Please file a Support case.
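To check the logs for that bucket on each indexer, something like the following can help. It is only a sketch: it assumes the usual splunkd.log layout where the level (WARN or ERROR) appears as its own whitespace-separated field, and that the bucket ID shown in the fixup task appears verbatim in the log message. The function name `bucket_problems` is made up for illustration.

```python
import re
from typing import Iterable, List

# Assumption: the log level is a standalone token surrounded by whitespace.
LEVEL_RE = re.compile(r"\s(WARN|ERROR)\s")


def bucket_problems(lines: Iterable[str], bucket_id: str) -> List[str]:
    """Return the WARN/ERROR lines that mention bucket_id."""
    return [
        line.rstrip("\n")
        for line in lines
        if bucket_id in line and LEVEL_RE.search(line)
    ]
```

To use it, open `$SPLUNK_HOME/var/log/splunk/splunkd.log` on a peer and pass the file object together with the bucket ID from the Fixup Tasks view. An empty result on every indexer would match the "no errors or warnings" condition described above.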

How did you rebuild the bucket? Did you stop the CP, rebuild the bucket, and start the CP again, and still have the issue? If you want the CM and all CPs to start from a clean bucket state, you can stop the CPs, restart the CM, and then start the CPs, and see whether that removes these buckets from the fixup list. If the same buckets come back and get stuck in the fixup list, please file a Support case.

- We're running 6.5.1. I'll go deeper in the logs on Monday to verify errors or warnings.

- I did as you mentioned: stopped Splunk, ran the rebuild, and restarted Splunk. The same issue remains. I also tried a full stop and complete restart of the cluster (it's a dev system), and the same issue remains.

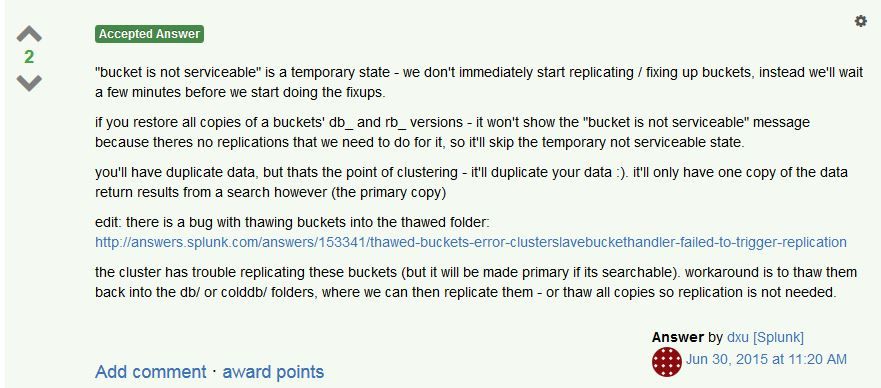

There is an interesting point in the post "Thawing data in an indexer clustering environment, do I need the rb_ buckets too?"

It says -

Thank you for the response.

I saw that post and didn't feel it was applicable, as the "bucket is not serviceable" message had been around for at least a couple of days by the time I noticed it.

In addition, we haven't done any data thawing or otherwise touched the data at all.

I see - makes sense...