Join the Conversation

- Find Answers

- :

- Using Splunk

- :

- Splunk Search

- :

- left join not working

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark Topic

- Subscribe to Topic

- Mute Topic

- Printer Friendly Page

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

left join not working

hi Splunk community,

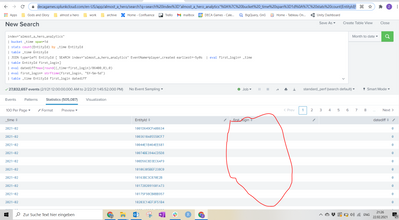

Somehow my left join is not working if I select all EntityIDs.

Althought when I select a single IdentityId, it is working...

Any hints on why the first one is not working and how I can fix it?

Thanks,

Zarrukh

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Since you have 500k events, JOIN command doesn't work as it is limited to 50k events. Try the following:

1. Write the data from the first search before JOIN command in your query to a lookup file (data_lookup_1.csv),

2. Write the data from the sub search to another lookup file (data_lookup_2.csv),

Your final query:

| inputlookup data_lookup_1.csv

| lookup data_lookup_2.csv EntityId

Try it and let me know if it works.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks, that is what I was thinking about. 2 questions

1. How I can create and write the results into that csv file?

2. How I can setup automatic update of that csv file?

Thanks

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

1. How I can create and write the results into that csv file?

- Use a saved search and run it for a day's data (Example: yesterday) and then write it to a lookup (preferably kvstore lookup).

- Since you have two searches write the output to 2 lookups.

2. How I can setup automatic update of that csv file?

- Schedule your first saved search to run it and update to lookup daily at 6am,

- Schedule your second search to run and update lookup at 6:30am

- Your final search query (shown below) which is matching the events from the two lookups should run at 7:00am and write it to a third lookup which will be your final lookup with matching events.

|inputlookup firstlookup

|lookup secondlookup fieldname

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Have you considered using stats instead of join to avoid limits (you have half a million entity ids!)

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

how I can use that?

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

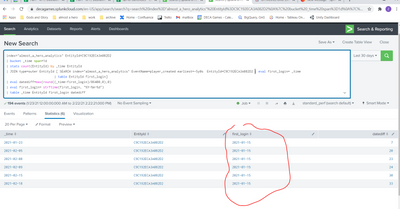

I would probably start like this

index="almost_a_hero_analytics"

| bin span=1d _time

| eval first_login=if(EventName="player_created", _time, null)

| eventstats values(first_login) as first_login by EntityId

| stats values(first_login) as first_login by _time EntityId

| eval datediff=max(round((_time-first_login)/86400,0),0)

| fieldformat first_login=strftime(first_login, "%Y-%m-%d")

| table _time EntityId first_login datediff- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

no, it will not work as it will take only people who created accounts for the selected time, but I need the list of accounts created for the whole time

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Good point - you could create a summary index which collects player creation dates, then use a map command to search this index for the player creation date - you would need to set the maxsearches so that it covers the number of players in your time period. You don't have to use a summary index, it is just that it might be quicker. If you are doing this in a dashboard, you might be able to have an initial search (either of your whole data set or the summary index) which gets all the players creation dates, and load the results from this in the map search.