Search Scheduler Searches Skipped

Hello,

I am seeing the warning below on our search head (SH). It appeared after Splunk Cloud performed a backend restart when I uninstalled an app from the SH.

Error:

Root Cause(s):

The percentage of non high priority searches skipped (100%) over the last 24 hours is very high and exceeded the red thresholds (20%) on this Splunk instance. Total Searches that were part of this percentage=12. Total skipped Searches=12

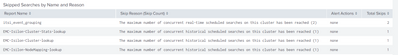

I identified the skipped searches using the Cloud Monitoring Console. Are there any troubleshooting steps to clear this message? The SH health status is still RED.

Thanks

Hi

You can see the reason each search was skipped on the "Search -> Skipped Scheduled Searches" dashboard. Update those searches to fix that reason, or change their schedules if too many searches are running at the same time.

r. Ismo

Hi @isoutamo

We are seeing the above-mentioned reason for the skipped searches. Since we are on Splunk Cloud, do we need to raise a request with Splunk Support to increase any limits in order to resolve this?

Thanks

So your SHs are running real-time searches, which are really resource intensive. As a quick fix, try converting them into scheduled searches; in most cases a real-time search is not required.

For the searches skipped because of concurrency limits, you can follow the steps below:

1. Detect which searches are being skipped

index=_internal earliest=-24h status=skipped sourcetype=scheduler

2. Which apps the skipped searches are coming from

index=_internal earliest=-24h status=skipped sourcetype=scheduler
| stats count by host app
| sort - count

3. Identify the bad searches (change the timeframe and host as per your needs)

index=_internal sourcetype=scheduler status!=queued earliest=-30d@d host=<server_name>
| eval is_realtime=if(searchmatch("sid=rt* OR concurrency_category=real-time_scheduled"),"yes","no")
| fields run_time is_realtime savedsearch_name user
| stats avg(run_time) as average_run_time max(run_time) as max_run_time min(run_time) as min_run_time max(is_realtime) as is_realtime by savedsearch_name user
| eval average_run_time = average_run_time/60
| eval min_run_time = min_run_time/60
| eval max_run_time = max_run_time/60
| sort - average_run_time
| join savedsearch_name [| rest /servicesNS/-/-/saved/searches splunk_server=<server_name>
    | search is_scheduled=1
    | rename title AS savedsearch_name
    | rename eai:acl.app as title
    | table splunk_server title savedsearch_name cron_schedule search ]
| rename title as "App Name"
| fields splunk_server savedsearch_name user average_run_time max_run_time min_run_time cron_schedule is_realtime search "App Name"
| sort splunk_server, -average_run_time

4. Modify scheduler limits

If the above did not remediate your situation, the last option is to increase the limits on the system by modifying limits.conf (make sure you do it in a custom app and not in the default file under $SPLUNK_HOME/etc/system/default).

Out of the box, on a 16-core system, Splunk can run 22 historical searches at any one time. That is calculated using the following formula:

max_hist_searches = max_searches_per_cpu (default of 1) x number_of_cpus (16) + base_max_searches (default of 6) = 22

Of those 22 searches, the scheduler is allocated 50 percent of that number by default (so 11 searches) according to the setting max_searches_perc.

Of those 11 searches that the scheduler can run, auto summarization (things like Data Models) is allowed 50% by default, according to the setting auto_summary_perc. Half of 11 is 5.5, so we end up with about 6 searches that can run at a time for your Data Models.

That said, if your data models are taking an extremely long time to run, or are not completing and consistently skipping, increasing base_max_searches from 6 to 12 will only get you to 7 auto summary searches at one time (28 total, 14 for the scheduler, 7 for auto summarization). You may want to alter auto_summary_perc in order to dedicate more searches to your Data Models. If you have the CPU resources, you may want to raise max_searches_per_cpu to something like 2. This would give an overall maximum of 38 historical searches (2 x 16 + 6), of which about 10 could be Data Model summarizations at any one time.

The above info is derived from here
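The arithmetic above can be checked with a small Python sketch. This is only an illustration of the formula as described in this post, not Splunk's own code: the function name `search_concurrency` is hypothetical, and rounding fractions up is an assumption chosen to match the "about 6" and "10" figures quoted above.

```python
import math

def search_concurrency(cpus, max_searches_per_cpu=1, base_max_searches=6,
                       max_searches_perc=50, auto_summary_perc=50):
    """Return (max_hist_searches, scheduler_slots, auto_summary_slots).

    Parameter names mirror the limits.conf settings mentioned in the post;
    the rounding (ceil) is an assumption, not confirmed Splunk behavior.
    """
    # Total concurrent historical searches allowed on the instance.
    max_hist = max_searches_per_cpu * cpus + base_max_searches
    # Share of that total the scheduler may use.
    scheduler = math.ceil(max_hist * max_searches_perc / 100)
    # Share of the scheduler's slots available to auto summarization.
    auto_summary = math.ceil(scheduler * auto_summary_perc / 100)
    return max_hist, scheduler, auto_summary

# Defaults on a 16-core search head.
print(search_concurrency(16))                           # (22, 11, 6)
# base_max_searches raised from 6 to 12.
print(search_concurrency(16, base_max_searches=12))     # (28, 14, 7)
# max_searches_per_cpu raised from 1 to 2.
print(search_concurrency(16, max_searches_per_cpu=2))   # (38, 19, 10)
```

Plugging in your own core count shows how much headroom each setting change would actually buy before asking for it to be changed.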

@soumyasaha25 Since we are using Splunk Cloud and don't have access to the back end of the SH, I will check with Splunk Support to see if they can increase max_searches_per_cpu to 2. I am also not sure about the specs of our SH tier, as it is controlled entirely by Splunk.

Thanks for the info. I will keep you posted if this solution works.

As @soumyasaha25 said, you should look through your RT searches and consider whether they can be converted to scheduled searches. Personally, I have never needed an alert to run as RT. The only situation where RT searches give you some advantage is watching events arrive while you are onboarding data.

I have identified a few apps that contain these scheduled searches, but most of these apps were pre-enabled when we signed up for Splunk Cloud, and we are not even using them. This question might be out of context, but can we disable these ITSI apps?

I think (I might be wrong, though) that you have a multi-tenant Splunk environment, so there is a chance that some other team is using those ITSI searches. My suggestion would be to reach out to Splunk Support; they should be able to assist you.
