- How to sort the date so that my graph is cohere...


Hello,

thank you in advance for your feedback.

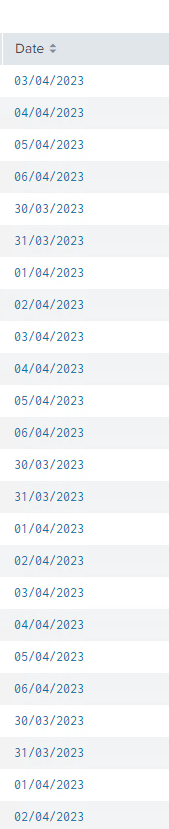

I would like to sort by date so that my graph is coherent. Can you please help me?

| tstats summariesonly=t allow_old_summaries=t count from datamodel=Authentication.Authentication where [| inputlookup ****.csv | eval host=Machine | table host ] AND NOT Authentication.src_user="*$" AND NOT Authentication.src_user="unknown" by host,Authentication.src,Authentication.src_user,_time

| eval host=upper(host)

| eval Serveur='Authentication.src'

| eval **** = upper(trim(('Authentication.src_user')))

| eval samaccountname=substr(trim(upper('Authentication.src_user')),1,7)

| eval domaine="****"

| lookup **** samaccountname as samaccountname domaine as domaine

| search email="*"

| eval **** = samaccountname

| table ****, ****, host, email,ua,cn,_time,cn

| join host type=left [| inputlookup *****.csv | eval host=Machine]

| where Ferme="****" OR Ferme="****" OR Ferme="*****" OR Ferme="*****" OR Ferme="****"

| stats values(Ferme) as Ferme values(_time) as _time by *****,cn

| eval Date=strptime(_time,"%d/%m/%Y")

| sort Date

| eval Date=strftime(_time,"%d/%m/%Y")

| stats count as "nb. de connexion par jour" by Ferme,Date


Hi @numeroinconnu12 ,

to sort by date, you have to convert the dates to epoch time. So, to sort your search:

| tstats summariesonly=t allow_old_summaries=t count from datamodel=Authentication.Authentication where [| inputlookup ****.csv | eval host=Machine | table host ] AND NOT Authentication.src_user="*$" AND NOT Authentication.src_user="unknown" by host,Authentication.src,Authentication.src_user,_time

| eval host=upper(host)

| eval Serveur='Authentication.src'

| eval **** = upper(trim(('Authentication.src_user')))

| eval samaccountname=substr(trim(upper('Authentication.src_user')),1,7)

| eval domaine="****"

| lookup **** samaccountname as samaccountname domaine as domaine

| search email="*"

| eval **** = samaccountname

| table ****, ****, host, email,ua,cn,_time,cn

| join host type=left [| inputlookup *****.csv | eval host=Machine]

| where Ferme="****" OR Ferme="****" OR Ferme="*****" OR Ferme="*****" OR Ferme="****"

| stats values(Ferme) as Ferme values(_time) as _time by *****,cn

| eval Date=strftime(_time,"%d/%m/%Y")

| stats count as "nb. de connexion par jour" by Ferme,Date

| eval epochDate=strptime(Date,"%d/%m/%Y")

| sort epochDate

| fields - epochDate

In addition, it is useless to sort before a stats command.

Ciao.

Giuseppe


It works, thank you @gcusello 🙂


You did well to convert the Date field to epoch form before sorting. However, the stats command spoiled that work by re-sorting by the Ferme field. Within each repeated Ferme value, the rows are then sorted lexicographically by Date, so a string such as "01/02/2023" lands before "15/01/2023" even though it is the later day.

I believe you can resolve the problem by putting the strftime call after the final stats.
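As a rough sketch of that idea (not the poster's actual search), the self-contained example below builds a few fake events with makeresults, keeps _time in epoch form through the final stats, sorts on it, and only then formats it as a string. The helper field n, the 20-day spacing, and the Ferme value "A" are made up for illustration; the bin step is an assumption added so that each day produces one row.

```spl
| makeresults count=4
| streamstats count as n
| eval _time=now() - n*86400*20
| eval Ferme="A"
| bin _time span=1d
| stats count as "nb. de connexion par jour" by Ferme, _time
| sort 0 _time
| eval Date=strftime(_time, "%d/%m/%Y")
| table Date, Ferme, "nb. de connexion par jour"
```

Because the sort runs on the numeric _time and strftime is applied afterwards, the Date strings should come out in chronological order even when they cross a month boundary.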

If this reply helps you, Karma would be appreciated.


Hi @numeroinconnu12 ,

good for you, see you next time!

Ciao and happy splunking

Giuseppe

P.S.: Karma Points are appreciated by all the contributors 😉