How to convert a date field with values as the number of days counting from the year 2000 to a dd/mm/yy format?

Hello,

I'm currently doing a school project that requires me to monitor a database file using Splunk. However, the database file contains a column in which the date is recorded as a number such as 5687. After researching for days, I found out that it is the number of days counted from the year 2000.

Is it possible to use Splunk to convert the date into dd/mm/yy format?

Assuming your column is being extracted as a field named field, how about this:

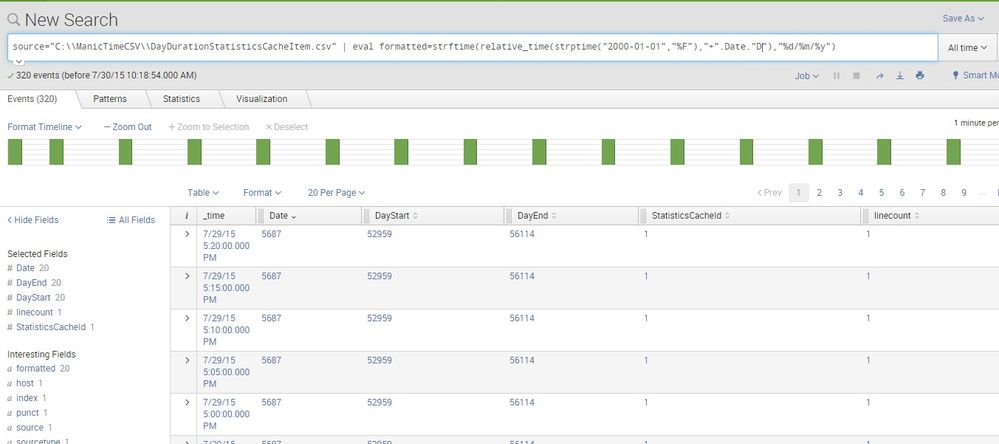

... | eval formatted=strftime(relative_time(strptime("2000-01-01","%F"),"+".field."d"),"%d/%m/%y")

The parts of this are:

1. strptime("2000-01-01","%F") -> Parse January 1st 2000 into the number of seconds since January 1st 1970 (Unix Epoch)

2. "+".field."d" -> Turn the field value into the relative time modifier to add the field number of days... e.g. "+5687d"

3. relative_time(<1>,<2>) -> adjust the timestamp found in 1 by the range built in 2

4. strftime(<3>,"%d/%m/%y") -> format the adjusted timestamp from 3 as dd/mm/yy.
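
To try the steps above without touching real data, something like the following should work as a standalone search. It is only a sketch: makeresults creates a single dummy event, and the hard-coded 5687 stands in for whatever your real field contains. It also assumes that day 0 is 1 January 2000, which is worth checking against a record whose real date you already know.

```spl
| makeresults
| eval field=5687
| eval base=strptime("2000-01-01","%F")
| eval shifted=relative_time(base,"+".field."d")
| eval formatted=strftime(shifted,"%d/%m/%y")
| table field formatted
```

By calendar arithmetic, 5687 days after 1 January 2000 is 28 July 2015, so that is the date to compare the formatted column against. If your data turns out to count 1 January 2000 as day 1 instead, the result will be off by one day and the base date would need to move back by a day.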

There are lots of other eval functions that you may want to reference and find helpful in the future.

You can use the table command to pick the fields you want in a tabular format. From your image, before the link broke, formatted was showing up as an extracted field on the left, and if you click the informational > next to an event you would likely see it there as well.

Now I'll admit the instances I primarily work on are a couple versions behind, so I haven't seen the events view spitting out a tabular format like that before. Another possibility could be to try the fieldformat command instead of the eval command, and see if that plays with the Events view in your version or not.
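
As a rough sketch of the fieldformat idea, again using makeresults and a hard-coded 5687 as stand-ins for your real events and field, it might look like this. I have not checked how it behaves in the Events view on newer versions, so treat it as something to experiment with.

```spl
| makeresults
| eval field=5687
| fieldformat field=strftime(relative_time(strptime("2000-01-01","%F"),"+".field."d"),"%d/%m/%y")
| table field
```

The difference from eval is that fieldformat only changes how the value is displayed. The field itself still holds the original number, so later commands that sort or compare on it keep working with the day count.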

This is the picture showing what I am trying to explain. Thanks for the answer, though. I will try to figure out how to make it work.

And the moment I change formatted to Date, my whole row goes blank. I'm not sure why this is happening.