- Splunk Answers

- :

- Using Splunk

- :

- Splunk Search

- :

- How to calculate percent increase of crime by mont...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark Topic

- Subscribe to Topic

- Mute Topic

- Printer Friendly Page

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

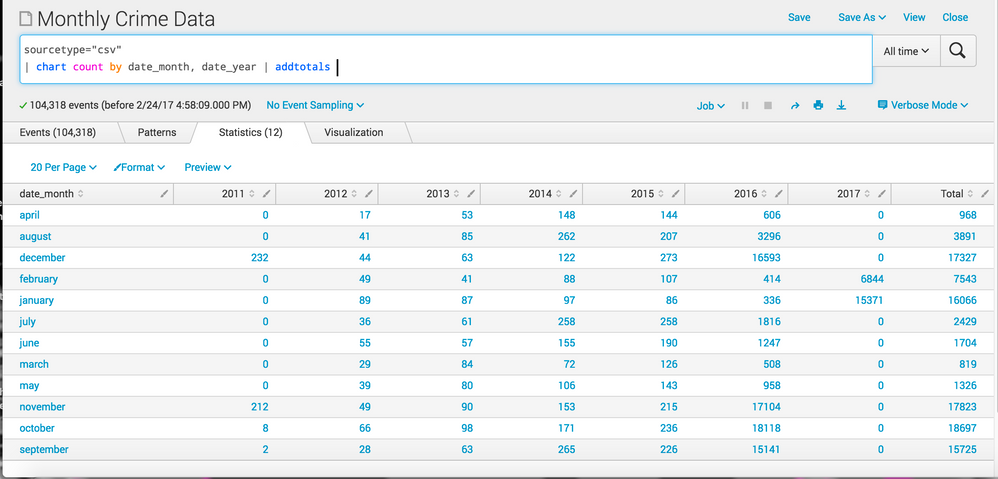

Hello, I'm new to Splunk and would appreciate any help.

I am trying to figure out what month had the largest percent increase in crime from 2011-2017.

I was able to list all the values out and add the totals but I'm not sure where to go from here.

Thank you for you help.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Give this a try

sourcetype=csv | stats count by date_month date_year

| appendpipe [| stats first(count) as from last(count) as to by date_month | eval count=round(to-from)*100/from,2) | eval date_year="PercentChange"| table date_month date_year count]

| appendpipe [| stats sum(count) as count by date_month | eval date_year="Total"| table date_month date_year count]

| xyseries date_month date_year count

The first line get count in following format

date_month, date_year, count

april, 2011, xxx

...

april,2017,yyy

..other months say way 2011 to 2017...

The first appendpipe takes the first count (for 2011) and last count (for 2017) and calculates % change. The column names are adjusted to follow the output of first line (date_month, date_year as label percent and count as percent value). Output of this is added to the end of the output so far.

date_month, date_year, count

..above output..

april, percentchange,zzzz

..similarly for other months...

The second appendpipe is to calculate total for the month. The columns names are again formatted to match the output of first line (date_month, date_year as label Total and count as total value). Output of this is added to the end of the output so far.

The xyseries will give output similar to chart command, where all date_month will come as row, all date_year values as column, include PercentChange and Total, and corresponding values will be populated.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Give this a try

sourcetype=csv | stats count by date_month date_year

| appendpipe [| stats first(count) as from last(count) as to by date_month | eval count=round(to-from)*100/from,2) | eval date_year="PercentChange"| table date_month date_year count]

| appendpipe [| stats sum(count) as count by date_month | eval date_year="Total"| table date_month date_year count]

| xyseries date_month date_year count

The first line get count in following format

date_month, date_year, count

april, 2011, xxx

...

april,2017,yyy

..other months say way 2011 to 2017...

The first appendpipe takes the first count (for 2011) and last count (for 2017) and calculates % change. The column names are adjusted to follow the output of first line (date_month, date_year as label percent and count as percent value). Output of this is added to the end of the output so far.

date_month, date_year, count

..above output..

april, percentchange,zzzz

..similarly for other months...

The second appendpipe is to calculate total for the month. The columns names are again formatted to match the output of first line (date_month, date_year as label Total and count as total value). Output of this is added to the end of the output so far.

The xyseries will give output similar to chart command, where all date_month will come as row, all date_year values as column, include PercentChange and Total, and corresponding values will be populated.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

hey somesoni2

in the second appendpipe, when you calculate the totals, I think you are also including the figures from the first appendpipe, and then the final figure is wrong.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thank you! There was an error with the eval command but I was able to fix it and I also added:

| fieldformat Percent=tostring(Percent) + "%"

to format "Percent".

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

hello,

@Kassandral, could you please tell my how do you fixe the Eval error ?

Thanks a lot,

Romain

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

The eval is missing an open parenthesis...

| eval count=round((to-from)*100/from,2)