Join the Conversation

- Find Answers

- :

- Using Splunk

- :

- Splunk Search

- :

- Comparing Multivalue fields by percentage

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark Topic

- Subscribe to Topic

- Mute Topic

- Printer Friendly Page

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I have customer names assigned to servers in multivalue fields.

Sample data below:

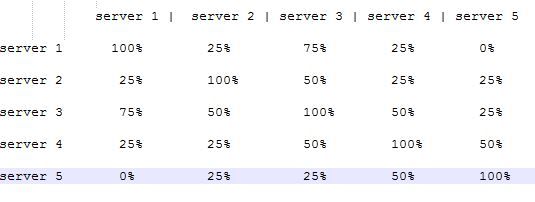

I need to be able to compare the data across all the mv fields and create a matrix with the server name on both the X/Y axis and show the match percentage.

Example expected output:

My current search is: ...| stats values(Customer) dc(Customer) AS customer_count by Server

which just creates my MV fields for each Server.

I am completely at a loss where to take it from here or if it is even possible. Being that this is Splunk, it probably is, i just lack the juju to make it happen.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

This was another very interesting challenge!

To start out, this part fakes some data so we can test:

| noop | stats count AS Customer

| eval Server="Server 1,Server 2,Server 3,Server 4,Server 5" | makemv delim="," Server | mvexpand Server

| eval Customer=case((Server="Server 1"), "A,D,C,Z",

(Server="Server 2"), "B,R,D,A",

(Server="Server 3"), "C,D,A,Z",

(Server="Server 4"), "D,B,C,Z",

(Server="Server 5"), "Z,B,Y,Q")

| makemv delim="," Customer | mvexpand Customer

Now for the MAGIC!

First build a contingency table as our initial matrix of Server-to-Customer (this is the same as | chart count BY Server Customer | addtotals col=t row=t labelfield=Server but many people have not seen the contingency command in action so this is as good a place as any to showcase it):

| contingency Server Customer | fields - TOTAL | search Server!="TOTAL"

Next, level out the numbers so that each cell in the matrix has either a 0 or a 1:

| foreach * [eval <<FIELD>> = case(NOT isnum($<<FIELD>>$), $<<FIELD>>$,

($<<FIELD>>$ > 0), 1,

true(), 0)]

At this point, we have data that looks like this:

Server A B C D Q R Y Z

Server 5 0 1 0 0 1 0 1 1

Server 4 0 1 1 1 0 0 0 1

Server 3 1 0 1 1 0 0 0 1

Server 2 1 1 0 1 0 1 0 0

Server 1 1 0 1 1 0 0 0 1

Now flatten the many Server* columns into a single field called bitmap and save the results:

| eval bitmap=":"

| foreach * [ eval bitmap = bitmap . if(isnum($<<FIELD>>$), $<<FIELD>>$, ":")] | rex field=bitmap mode=sed "s/://g"

| rex field=bitmap mode=sed "s/(.)/\\1,/g" | makemv delim="," bitmap

| fields Server bitmap | outputcsv eraseme.csv

At this point, we have data that looks like this:

Server bitmap

Server 5 0

1

0

0

1

0

1

1

Server 4 0

1

1

1

0

0

0

1

Server 3 1

0

1

1

0

0

0

1

Server 2 1

1

0

1

0

1

0

0

Server 1 1

0

1

1

0

0

0

1

Lastly, iterate over each row (each Server) using map and do the "magic" on each row in the temp file:

| map maxsearches=10000 search="|inputcsv eraseme.csv | makemv delim=\",\" bitmap

| eval \"$Server$\"=$bitmap$

| makemv delim=\",\" \"$Server$\"

| rename $Server$ AS noSpacesServerName

| eval noSpacesServerName = mvzip(noSpacesServerName, bitmap, \"\")

| fields - bitmap

If we take a look at the first iteration at this point (as though we had added a | search Server="Server5" before the map call), the data looks like this:

Server Server 5

Server 5 00

11

00

00

11

00

11

11

Server 4 00

11

01

01

10

00

10

11

Server 3 01

10

01

01

10

00

10

11

Server 2 01

11

00

01

10

01

10

10

Server 1 01

10

01

01

10

00

10

11

You can easily see what comes next: we just have to count some things in the merged (and renamed) bitmap (the number of "11" values and the number of "*1" values) and do the math:

| eval $Server$ = 100 * mvcount(mvfilter(like(noSpacesServerName, \"11\"))) / mvcount(mvfilter(like(noSpacesServerName, \"%1\")))

| fields - noSpacesServerName"

| stats values(*) AS * BY Server

The only downside is that this is limited to 10K distinct values of Server because map uses subsearches.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

As map has some well known limitations, including kicking off a bunch of parallel searches at a time (default max is 10), I figured I'd come up with a non- map solution to this.

First you say you're doing ... | stats values(Customer) dc(Customer) AS customer_count by Server into this point... I start with the assumption that you're instead doing ... | stats count by Customer, Server (we don't care about count, just the pairs of Customer and Server)

Using a combination of makeresults command (If you're on Splunk 6.3+, otherwise use |noop|stats count|fields for the same fake one result), and the multikv command, we can mock up to that state like this:

|makeresults |eval _raw="count, Customer, Server

2, A, server 1

2, D, server 1

2, Z, server 1

2, C, server 1

2, B, server 1

2, B, server 2

2, R, server 2

2, D, server 2

2, A, server 2

2, C, server 3

2, D, server 3

2, A, server 3

2, Z, server 3

2, D, server 4

2, B, server 4

2, C, server 4

2, Z, server 4

2, Z, server 5

2, B, server 5

2, Y, server 5

2, Q, server 5" |multikv forceheader=1 |foreach * [eval <<FIELD>>=trim(<<FIELD>>)] |table count,Customer,Server

(Ok, I could have gotten rid of the spaces in my fake results, and thus gotten rid of the foreach command but style... also I added a customer B to server 1, because how often are things always completely even 🙂 )

Now the other solution:

( ... | stats count by Customer, Server )

| eval key=Customer."::".Server | eventstats values(key) as key | mvexpand key

| eval key=split(key,"::"), OtherCustomer=mvindex(key,0), OtherServer=mvindex(key,1)

| stats count(eval(Customer==OtherCustomer)) as match dc(Customer) as total by Server, OtherServer

| eval percent=round(match/total*100)

| xyseries Server, OtherServer, percent

First we want the cross product of all customer server pairs to all other customer server pairs. So the first line of the solution we use eval to combine the values of Customer and Server into a single field (the delimiter :: must not appear in either customer or server so choose another string if need be). Using eventstats and mvexpand we complete the cross product (you now have the square of the number of results, every server customer pair crossed with every other server customer pair).

On the second line, we use eval again to split the key into fields again (obviously adjust the delimiter in the split eval function if you needed to adjust it in the first line). This is using Splunk 6.3+ syntax, if you are on 6.2 or earlier, you would just have a single eval per field instead of multiple fields separated by commas, i.e. | eval key=split(key,"::") | eval OtherCustomer=mvindex(key,0) | eval OtherServer=mvindex(key,1)

Now the magic 3rd line... for every pair of Server and Other Server, we want the number of customers that match in the cross product, as well as the number of customers for the current server. count matching a particular condition with eval is a handy trick to solve this for us. With this information, calculating percentage is easy on the 4th line.

Finally on the 5th line we take the data of Server -> Other Server -> percentage and present it in a tabular form as requested using xyseries. As your example set is perfectly symmetrical over the diagonal of the table it's hard to tell if you want this or xyseries OtherServer Server percent depending on which way you want your data presented.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Excellent. I had never realized it before but map and eventstats serve a nearly identical purpose and probably anything that can be done with one can be reworked to be done with the other!

P.S. In your solution, I would have used this:

| rex field=key "^(?<OtherCustomer>.*?)::(?<OtherServer>.*?)$"

instead of this:

| eval key=split(key,"::"), OtherCustomer=mvindex(key,0), OtherServer=mvindex(key,1)

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks Gregg. I validated the data this morning and it looks great. Thanks for the help!

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

So, some points of clarification on your sample data? Server 1 appears to have results for customers A, C, D and Z. Server 3 also has results for customers A, C, D, and Z. Why is there a 75% in the percentage chart for server1 x server3 and server 3 x server 1? Is there a bug in your sample, or am I misunderstanding what you are trying to accomplish?

Before your stats, what does your source data actually look like? Do you need multivalue fields, or is this just how what you have so far has worked out?

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Yes, his data does not match his mockedup output. The actual output should be this:

Server Server 1 Server 2 Server 3 Server 4 Server 5

Server 1 100 50 100 75 25

Server 2 50 100 50 50 25

Server 3 100 50 100 75 25

Server 4 75 50 75 100 50

Server 5 25 25 25 50 100

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

This was another very interesting challenge!

To start out, this part fakes some data so we can test:

| noop | stats count AS Customer

| eval Server="Server 1,Server 2,Server 3,Server 4,Server 5" | makemv delim="," Server | mvexpand Server

| eval Customer=case((Server="Server 1"), "A,D,C,Z",

(Server="Server 2"), "B,R,D,A",

(Server="Server 3"), "C,D,A,Z",

(Server="Server 4"), "D,B,C,Z",

(Server="Server 5"), "Z,B,Y,Q")

| makemv delim="," Customer | mvexpand Customer

Now for the MAGIC!

First build a contingency table as our initial matrix of Server-to-Customer (this is the same as | chart count BY Server Customer | addtotals col=t row=t labelfield=Server but many people have not seen the contingency command in action so this is as good a place as any to showcase it):

| contingency Server Customer | fields - TOTAL | search Server!="TOTAL"

Next, level out the numbers so that each cell in the matrix has either a 0 or a 1:

| foreach * [eval <<FIELD>> = case(NOT isnum($<<FIELD>>$), $<<FIELD>>$,

($<<FIELD>>$ > 0), 1,

true(), 0)]

At this point, we have data that looks like this:

Server A B C D Q R Y Z

Server 5 0 1 0 0 1 0 1 1

Server 4 0 1 1 1 0 0 0 1

Server 3 1 0 1 1 0 0 0 1

Server 2 1 1 0 1 0 1 0 0

Server 1 1 0 1 1 0 0 0 1

Now flatten the many Server* columns into a single field called bitmap and save the results:

| eval bitmap=":"

| foreach * [ eval bitmap = bitmap . if(isnum($<<FIELD>>$), $<<FIELD>>$, ":")] | rex field=bitmap mode=sed "s/://g"

| rex field=bitmap mode=sed "s/(.)/\\1,/g" | makemv delim="," bitmap

| fields Server bitmap | outputcsv eraseme.csv

At this point, we have data that looks like this:

Server bitmap

Server 5 0

1

0

0

1

0

1

1

Server 4 0

1

1

1

0

0

0

1

Server 3 1

0

1

1

0

0

0

1

Server 2 1

1

0

1

0

1

0

0

Server 1 1

0

1

1

0

0

0

1

Lastly, iterate over each row (each Server) using map and do the "magic" on each row in the temp file:

| map maxsearches=10000 search="|inputcsv eraseme.csv | makemv delim=\",\" bitmap

| eval \"$Server$\"=$bitmap$

| makemv delim=\",\" \"$Server$\"

| rename $Server$ AS noSpacesServerName

| eval noSpacesServerName = mvzip(noSpacesServerName, bitmap, \"\")

| fields - bitmap

If we take a look at the first iteration at this point (as though we had added a | search Server="Server5" before the map call), the data looks like this:

Server Server 5

Server 5 00

11

00

00

11

00

11

11

Server 4 00

11

01

01

10

00

10

11

Server 3 01

10

01

01

10

00

10

11

Server 2 01

11

00

01

10

01

10

10

Server 1 01

10

01

01

10

00

10

11

You can easily see what comes next: we just have to count some things in the merged (and renamed) bitmap (the number of "11" values and the number of "*1" values) and do the math:

| eval $Server$ = 100 * mvcount(mvfilter(like(noSpacesServerName, \"11\"))) / mvcount(mvfilter(like(noSpacesServerName, \"%1\")))

| fields - noSpacesServerName"

| stats values(*) AS * BY Server

The only downside is that this is limited to 10K distinct values of Server because map uses subsearches.