Calculating utilization of nodes connected in Tree network topology

I have been busting my brain on this for a few weeks with no clear solution, so I'm turning to the brainiacs in the Splunk community for help.

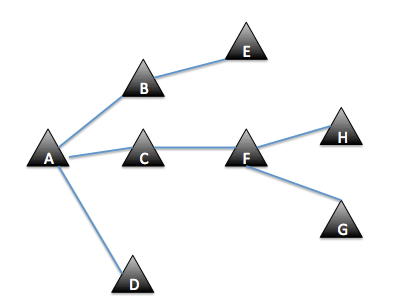

I have bandwidth utilization data for a few thousand telecom station nodes. They are all connected in a tree network topology; the image below is an example of what that looks like. A subnetwork begins where node A, which is connected to a backhaul, branches off links to child nodes. At any point along the branches, further sub-branches may split off as well.

We are trying to build a dashboard of utilization data for the links between nodes. The challenge is that my data is hourly utilization for individual nodes, but the utilization of each link needs to accumulate the utilization of all nodes under it. In this example, the utilization of link A-C is the total utilization of nodes C+F+H+G.
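To make the accumulation rule concrete, here is a minimal Python sketch, run outside Splunk just to check the logic. The edge list is an assumption based on the example tree (only the fact that C, F, H and G sit under link A-C is stated above), and the per-node utilization numbers are made-up placeholders.

```python
from collections import defaultdict

# Assumed parent -> child links for the example tree (hypothetical beyond the A-C subtree).
EDGES = [("A", "B"), ("A", "C"), ("A", "D"), ("B", "E"),
         ("C", "F"), ("F", "G"), ("F", "H")]

# Placeholder per-node utilization for a single hour (not real data).
NODE_UTIL = {"A": 5, "B": 10, "C": 20, "D": 30, "E": 40, "F": 50, "G": 60, "H": 70}


def link_utilization(edges, node_util):
    """Return {(parent, child): utilization of child plus every node below it}."""
    children = defaultdict(list)
    for parent, child in edges:
        children[parent].append(child)

    def subtree_total(node):
        # A node's own utilization plus the totals of all of its child subtrees.
        return node_util[node] + sum(subtree_total(c) for c in children[node])

    return {(parent, child): subtree_total(child) for parent, child in edges}


totals = link_utilization(EDGES, NODE_UTIL)
print(totals[("A", "C")])  # C + F + G + H = 20 + 50 + 60 + 70 = 200
```

The recursion is fine for a sketch; a very deep tree would need an iterative traversal instead.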

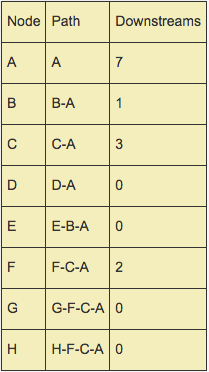

I have a lookup that provides the details for each node in this form:

My objective is to build:

1) a scheduled search that can iterate over multiple trees to calculate the utilization of each link (around 7k+ links in total). The utilization of a link is the total utilization of all its subnodes.

2) an interactive dashboard where the user can enter the name of a link (e.g. A-B or F-H) and get the current utilization of that link.

Can anyone help? Your wildest ideas are welcome.


I hate to make it sound trivial, but how about setting up a system that flattens the network into summary records? Any node Y whose traffic needs to be added to the summary of node X gets a record X - Y. Then the report is the equivalent of a simple join of the traffic to the right side of the records, with the records summarized by the value on the left.

A A

A B

A C

A D

A E

A F

A G

A H

B B

B E

C C

C F

C G

C H

D D

E E

F F

F G

F H

G G

H H

Thus, you don't need to iterate over anything at search and presentation time.

As far as building the flattened version, I believe there is a simple way to do that, too. First, produce the initial network as a single node-to-node flat file...

| makeresults

| eval mydata="(A,A);(A,B);(A,C);(A,D);(B,B);(B,E);(C,C);(C,F);(D,D);(E,E);(F,F);(F,H);(F,G);(G,G);(H,H)"

| makemv delim=";" mydata | mvexpand mydata

| rex field=mydata "\((?<node1>\w),(?<node2>\w)"

| table node1 node2

| outputcsv test1.csv

...then run this search repeatedly. Each run joins the file to itself, which doubles the path length it covers, so the number of runs needed is roughly log2 of the highest node depth in your network, rounded up....

| inputcsv test1.csv

| join type=left max=0 node2 [ |inputcsv test1.csv | rename node2 as node3 | rename node1 as node2]

| eval node2=coalesce(node3,node2)

| fields - node3

| inputcsv append=t test1.csv

| dedup node1 node2

| outputcsv append=f test1.csv

In this case, twice.
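As a sanity check on the pass count, the same self-join can be sketched in Python. This is only an offline illustration of the idea, not the SPL itself; `close_once` and `flatten` are hypothetical helper names, and the seed pairs are the ones from the `makeresults` search above.

```python
def close_once(pairs):
    """One pass: join the pair list to itself on node2 = node1, then merge with the input."""
    by_left = {}
    for left, right in pairs:
        by_left.setdefault(left, set()).add(right)
    extended = {(n1, n3) for n1, n2 in pairs for n3 in by_left.get(n2, ())}
    return pairs | extended


def flatten(pairs):
    """Repeat close_once until nothing new appears; return (closure, passes that added pairs)."""
    pairs = set(pairs)
    passes = 0
    while True:
        nxt = close_once(pairs)
        if nxt == pairs:
            return pairs, passes
        pairs, passes = nxt, passes + 1


SEED = {("A", "A"), ("A", "B"), ("A", "C"), ("A", "D"), ("B", "B"), ("B", "E"),
        ("C", "C"), ("C", "F"), ("D", "D"), ("E", "E"), ("F", "F"), ("F", "H"),
        ("F", "G"), ("G", "G"), ("H", "H")}

closure, passes = flatten(SEED)
print(passes)                                          # 2
print(sorted(n2 for n1, n2 in closure if n1 == "C"))   # ['C', 'F', 'G', 'H']
```

The first pass adds the two-hop pairs (for example A-E and C-H), and the second adds the three-hop pairs A-G and A-H; a third pass finds nothing new.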

You might have to break it up a little if the number of edges grows beyond the 50K limit on subsearch results. If so, you may be able to get around that by defining the csv as a lookup, which you probably want to do anyway, but one that returns up to a thousand results. Alternatively, you could use the lookup to suppress any results that are already present in the lookup file, so that the subsearch limit would only cap each run at adding 50K new edges, rather than capping the total number of calculated edges.

For testing the above, this generates some random traffic...

| makeresults | eval mydata="A B C D E F G H" | makemv mydata| mvexpand mydata

| eval mytimes=mvrange(relative_time(now(),"@m-15m"),now(),60)

| mvexpand mytimes

| eval _time=mytimes

| eval traffic= if(mydata="C" OR mydata="D" OR mydata="G" OR mydata="H",

(random() %37) + 2*(random() %29),

(random() %37) + 2*(random() %29) + 3* (random() % 57))

| sort 0 _time mydata

| rename mydata as node

| table _time node traffic

| outputcsv test2.csv

And here's one of the many possible ways to then produce the report:

| inputcsv test1.csv

| join max=0 node2 [| inputcsv test2.csv | rename node as node2 | rename _time as Time | table Time node2 traffic ]

| fields Time node1 node2 traffic

| rename Time as _time

| appendpipe [ | stats sum(traffic) as traffic by _time node1 | eval node2="Total"]

| sort 0 _time node1
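For readers who want to check the join-and-sum logic without a Splunk instance, here is a small Python sketch of the same idea. The pairs cover only the B subtree, the traffic rows are hypothetical stand-ins for test2.csv, and `totals_by_time` is an illustrative name.

```python
from collections import defaultdict

# Flattened (node1, node2) pairs for the B subtree only.
PAIRS = [("B", "B"), ("B", "E"), ("E", "E")]

# Hypothetical (time, node, traffic) rows standing in for test2.csv.
TRAFFIC = [("10:00", "B", 12), ("10:00", "E", 30),
           ("10:01", "B", 7), ("10:01", "E", 5)]


def totals_by_time(pairs, traffic):
    """Join traffic rows to pairs on node2, then sum traffic by (time, node1)."""
    owners = defaultdict(list)  # node2 -> every node1 whose summary includes it
    for node1, node2 in pairs:
        owners[node2].append(node1)

    totals = defaultdict(int)
    for time, node, value in traffic:
        for node1 in owners.get(node, ()):
            totals[(time, node1)] += value
    return dict(totals)


report = totals_by_time(PAIRS, TRAFFIC)
print(report[("10:00", "B")])  # B + E at 10:00 = 12 + 30 = 42
```

Because the pairs are precomputed, the report needs no tree traversal at all, only a join and a grouped sum.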


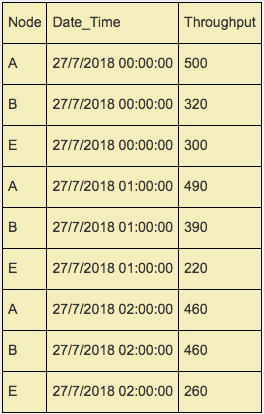

Thanks FrankVI for your attention to this question. Somehow I can't attach an image in a comment directly to you, so I'm attaching this example of the bandwidth data in an answer.

The bandwidth data looks like this for a sample of nodes A, B and E for the period from 12AM to 2AM on 27/7/2018. Note that I'm selecting these particular nodes because they are in one subtree, A-B-E; the full data set has bandwidth utilization for all nodes at every hour.

Referring back to the tree structure knowledge, let's have some calculation scenarios:

A) utilization for the link that connects A and B at 2AM

- I will sum up bandwidth utilization (aka Throughput) for the subnodes under it, B and E, which amounts to 460+260=720.

B) utilization for the link that connects B and E at 2AM

- Since there is only 1 subnode under this link (E), the utilization for this link is just the utilization for E, which is 260.
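The two scenarios can be reproduced with a short Python sketch of the dashboard-style lookup. It assumes a link is named "parent-child" (as in A-B or B-E) and that a flattened list of node pairs for the A-B-E subtree is available; `link_utilization` is a hypothetical helper, and only the 2AM throughput values quoted above are used.

```python
# Flattened pairs for the A-B-E subtree: each node paired with itself and its descendants.
PAIRS = [("A", "A"), ("A", "B"), ("A", "E"),
         ("B", "B"), ("B", "E"), ("E", "E")]

# Throughput at 2AM from the scenarios above (A's own value is not needed for these links).
THROUGHPUT_2AM = {"B": 460, "E": 260}


def link_utilization(link, pairs, throughput):
    """Utilization of a 'parent-child' link: sum over the child node and all nodes below it."""
    _parent, child = link.split("-")
    return sum(throughput[n2] for n1, n2 in pairs if n1 == child)


print(link_utilization("A-B", PAIRS, THROUGHPUT_2AM))  # 460 + 260 = 720
print(link_utilization("B-E", PAIRS, THROUGHPUT_2AM))  # 260
```

Only the child end of the link name matters for the sum, since a link carries the traffic of everything below it.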


I can fix comments.


Can you provide samples or screenshots of how the data is stored in Splunk, both the bandwidth data and the tree structure knowledge?