- Splunk Answers

- :

- Using Splunk

- :

- Reporting

- :

- Could someone give more details on the report acce...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark Topic

- Subscribe to Topic

- Mute Topic

- Printer Friendly Page

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

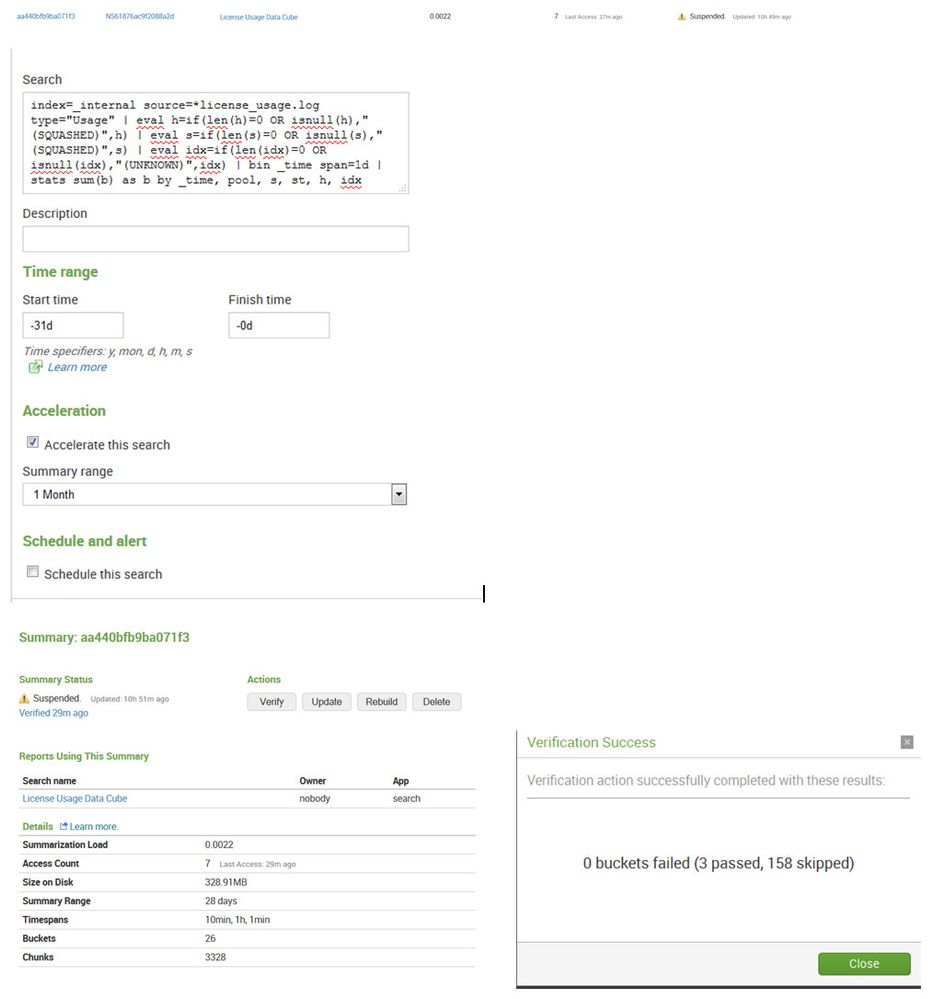

Could someone give more details on the report acceleration verify summary messaging?

I turned on acceleration for the report "License Usage Data Cube" included on the License Usage page. However, when I verify the summary, the details in the resulting message are not clear to me.

How do I get the failed reason?

How do I get the skipped reason? (I know events must be 100k per bucket to qualify, but how do I know if this was the reason for skipping?)

To reproduce: go to Settings > Report acceleration summaries, click the summary ID link, then click Verify and choose thorough verification. Let that run, then click the link for the summary status.

96% Complete Updated: 7m ago

Failed to verify < 1 min ago

Clicking on the link I see:

103 buckets failed (5 passed, 38 skipped)
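When comparing repeated verification runs, it can help to turn that status text into numbers. The following is a minimal sketch that assumes the message always has the shape quoted above; the function name `parse_verify_status` is hypothetical and is not part of Splunk.

```python
import re

# Assumed message shape, taken from the status text quoted above:
#   "<N> buckets failed (<P> passed, <S> skipped)"
STATUS_RE = re.compile(r"(\d+) buckets failed \((\d+) passed, (\d+) skipped\)")


def parse_verify_status(text):
    """Return failed/passed/skipped counts plus the share of failed buckets."""
    match = STATUS_RE.search(text)
    if match is None:
        raise ValueError(f"unrecognized status text: {text!r}")
    failed, passed, skipped = (int(group) for group in match.groups())
    total = failed + passed + skipped
    return {
        "failed": failed,
        "passed": passed,
        "skipped": skipped,
        "total": total,
        # Share of all reported buckets that failed verification.
        "failed_share": failed / total if total else 0.0,
    }


if __name__ == "__main__":
    print(parse_verify_status("103 buckets failed (5 passed, 38 skipped)"))
```

This only summarizes the counts; it cannot recover the reason a bucket failed or was skipped, which is the information the message does not expose.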


Would this be a valid search to check for eligibility?

    index=_internal source=*license_usage.log type="Usage"
    | eval bucket_event_id=_cd
    | rex field=bucket_event_id "(?<bucket_id>[^:]+):"
    | stats count as eligible_event_count by index splunk_server bucket_id
    | join [ search index=_internal
        | eval bucket_event_id=_cd
        | rex field=bucket_event_id "(?<bucket_id>[^:]+):"
        | stats count as bucket_event_count by index splunk_server bucket_id ]
    | eval eligible_event_pct=eligible_event_count/bucket_event_count
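To make the intent of that search concrete, here is a rough Python equivalent of its `rex`, `stats`, and `eval` steps. It assumes, as the search itself does, that `_cd` holds the bucket ID followed by a colon and an event address. The function names and sample values are made up for illustration. Note that the result is a ratio of event counts, not a ratio of sizes on disk.

```python
import re
from collections import Counter

# Same pattern as the rex in the search: everything before the first colon.
BUCKET_ID_RE = re.compile(r"([^:]+):")


def bucket_id(cd):
    """Extract the bucket ID from a _cd value such as '12:34567' (assumed format)."""
    match = BUCKET_ID_RE.match(cd)
    return match.group(1) if match else None


def count_by_bucket(cds):
    """Count events per bucket ID, ignoring values without a recognizable ID."""
    ids = (bucket_id(cd) for cd in cds)
    return Counter(b for b in ids if b is not None)


def eligible_event_pct(eligible_cds, all_cds):
    """Per-bucket ratio of eligible events to all events, by event count."""
    eligible = count_by_bucket(eligible_cds)
    total = count_by_bucket(all_cds)
    # Like the inner join in the search, keep only buckets present on both sides.
    return {b: eligible[b] / total[b] for b in eligible if total[b]}


if __name__ == "__main__":
    all_events = ["12:1", "12:2", "12:3", "12:4", "13:1"]
    usage_events = ["12:1", "13:1"]
    print(eligible_event_pct(usage_events, all_events))
```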

Is this the same ratio as auto_summarize.max_summary_ratio?

auto_summarize.max_summary_ratio = <positive float>

* The maximum ratio of summary_size/bucket_size when to stop summarization and deem it unhelpful for a bucket

* NOTE: the test is only performed if the summary size is larger than auto_summarize.max_summary_size

* Defaults to: 0.1


As report acceleration is touted as the "easy button" as compared to traditional summary indexes, I strongly believe that the messaging on the Report Acceleration Summaries page should be updated to give exact reasons for failure or skipping of buckets and suspension of the summary.


> How do I get the skipped reason? (I know events must be 100k per bucket to qualify, but how do I know if this was the reason for skipping?)

Given that verification amounts to computing the summary again and comparing it with what's already present (i.e. it's pretty expensive), we randomly pick buckets to verify. If we picked all of them, we might as well rebuild the summary.

Regarding the suspended status: we suspend report acceleration if the size of a summary is above a certain threshold (summary size / bucket size > threshold).

Look at the following entries in savedsearches.conf.spec:

auto_summarize.max_summary_size = <unsigned int>

- The minimum summary size when to start testing its helpfulness

- Defaults to 52428800 (50MB)

auto_summarize.max_summary_ratio = <positive float>

- The maximum ratio of summary_size/bucket_size when to stop summarization and deem it unhelpful for a bucket

- NOTE: the test is only performed if the summary size is larger than auto_summarize.max_summary_size

- Defaults to: 0.1

auto_summarize.max_disabled_buckets = <unsigned int>

- The maximum number of buckets with the suspended summarization before the summarization search is completely stopped and the summarization of the search is suspended for auto_summarize.suspend_period

- Defaults to: 2
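One way to read how these three settings interact is sketched below. This is an interpretation of the spec text only, not Splunk's actual implementation. It assumes sizes are in bytes and that suspension happens once the count of unhelpful buckets exceeds `max_disabled_buckets`; whether the real comparison is "greater than" or "at least" is not stated in the spec. All function names are hypothetical.

```python
# Defaults copied from the savedsearches.conf.spec excerpt above.
MAX_SUMMARY_SIZE = 52428800   # assumed to be bytes
MAX_SUMMARY_RATIO = 0.1
MAX_DISABLED_BUCKETS = 2


def summary_unhelpful(summary_size, bucket_size,
                      max_size=MAX_SUMMARY_SIZE, max_ratio=MAX_SUMMARY_RATIO):
    """True when a bucket's summary would be deemed unhelpful.

    Per the spec note, the ratio test is only performed once the summary
    is larger than max_summary_size.
    """
    if summary_size <= max_size or bucket_size <= 0:
        return False
    return summary_size / bucket_size > max_ratio


def should_suspend(buckets, max_disabled=MAX_DISABLED_BUCKETS):
    """buckets: iterable of (summary_size, bucket_size) pairs.

    Assumption: suspension triggers when the number of unhelpful buckets
    exceeds max_disabled (strictly greater than).
    """
    disabled = sum(1 for s, b in buckets if summary_unhelpful(s, b))
    return disabled > max_disabled


if __name__ == "__main__":
    # A 60 MB summary for a 500 MB bucket has ratio 0.12, above the 0.1 limit.
    print(summary_unhelpful(60_000_000, 500_000_000))
    # A small summary is never tested, whatever its ratio.
    print(summary_unhelpful(10_000_000, 20_000_000))
```

Under this reading, a summary can be suspended even though the report itself runs correctly, simply because the summaries are large relative to their buckets.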


Setting the limits.conf value below to a lower number appears to get rid of the suspension. As a test, I set it to "1". I'm working on creating a search that will tell me the ratio of matching events to all events in the bucket. http://docs.splunk.com/Documentation/Splunk/6.2.2/Admin/Limitsconf

In limits.conf:

[summarize]

hot_bucket_min_new_events =

* The minimum number of new events that need to be added to the hot bucket (since last summarization)

* before a new summarization can take place. To disable hot bucket summarization set this value to a

* large positive number.

* Defaults to 100000
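Read literally, that setting acts as a gate on hot bucket summarization. The sketch below shows that reading, under the assumption that "minimum" means "at least this many new events". The function and parameter names are hypothetical and do not come from Splunk.

```python
HOT_BUCKET_MIN_NEW_EVENTS = 100000  # default from the limits.conf excerpt above


def hot_bucket_ready(events_now, events_at_last_summary,
                     min_new_events=HOT_BUCKET_MIN_NEW_EVENTS):
    """True when enough new events have arrived since the last summarization."""
    new_events = events_now - events_at_last_summary
    return new_events >= min_new_events


if __name__ == "__main__":
    # 50,000 new events: below the default threshold, so not summarized yet.
    print(hot_bucket_ready(150000, 100000))
    # With the threshold lowered to 1, a single new event is enough.
    print(hot_bucket_ready(5, 4, min_new_events=1))
```

If this reading is right, lowering the value to 1 means almost any hot bucket qualifies for a new summarization pass, at the cost of summarizing far more often.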


Does skipped mean skipped bucket verification or skipped as in bucket does not qualify?


Report acceleration summary verification is documented here: http://docs.splunk.com/Documentation/Splunk/6.2.2/Knowledge/Manageacceleratedsearchsummaries#Verify_...

Report acceleration summaries fail verification when the data they contain is inconsistent, meaning that the newer data in the summary has fundamental differences from the older data in the summary. This can happen when (sometimes quite subtle) changes are made to components of the base search, such as a change to the definition of a tag or event type. In your case it could be that the wildcarded source=*license_usage.log is finding data from a wider range of log files now than it did originally.

If you're certain that the base search is working properly you can rebuild the summary and then verify the rebuilt summary. You may get better results.

As for skipped buckets: the verification process skips buckets that are hot, as well as buckets that are in the process of being built.
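The skip rule just described can be pictured as a simple partition of buckets. This is an illustrative sketch only: the `Bucket` class and its `is_hot` and `is_building` flags are hypothetical and do not reflect Splunk's internal data model.

```python
from dataclasses import dataclass


@dataclass
class Bucket:
    bucket_id: str
    is_hot: bool = False        # bucket is still being written to
    is_building: bool = False   # summary for this bucket is still being built


def split_for_verification(buckets):
    """Partition buckets into (to_verify, skipped) following the rule above."""
    to_verify, skipped = [], []
    for bucket in buckets:
        if bucket.is_hot or bucket.is_building:
            skipped.append(bucket)
        else:
            to_verify.append(bucket)
    return to_verify, skipped


if __name__ == "__main__":
    sample = [
        Bucket("a"),
        Bucket("b", is_hot=True),
        Bucket("c", is_building=True),
    ]
    to_verify, skipped = split_for_verification(sample)
    print([b.bucket_id for b in to_verify], [b.bucket_id for b in skipped])
```

In this picture "skipped" refers to verification being skipped for a bucket, not to the bucket failing to qualify for summarization.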


I picked the license usage log report since it's included with Splunk out of the box and the UI has a note suggesting you accelerate it. I'm having trouble with other report accelerations as well, but I wanted to start with something simple.

The report seems to run normally and give proper results.

index=_internal source=*license_usage.log type="Usage" | eval h=if(len(h)=0 OR isnull(h),"(SQUASHED)",h) | eval s=if(len(s)=0 OR isnull(s),"(SQUASHED)",s) | eval idx=if(len(idx)=0 OR isnull(idx),"(UNKNOWN)",idx) | bin _time span=1d | stats sum(b) as b by _time, pool, s, st, h, idx

Is there any way, such as increasing the logging level, to have Splunk tell me why it's skipping or failing buckets? Otherwise it seems like a whole bunch of guessing and trial and error.

It's very strange to me that it says the summary is being accessed yet the status is suspended.

- Access Count: 10 (Last Access: < 1 min ago)
- Summary Status: Suspended (Updated: 13h 15m ago)
- Search name: License Usage Data Cube
- Owner: nobody
- App: search

Details

- Summarization Load: 0.0020
- Access Count: 10 (Last Access: 5m ago)
- Size on Disk: 1352.61MB
- Summary Range: 28 days
- Timespans: 10min, 1d, 1h, 1min
- Buckets: 92
- Chunks: 16316

Just ran the thorough verify again:

70 buckets failed (3 passed, 96 skipped)


Bueller, Bueller, Bueller...