- Custom streaming search command error


I created a custom streaming command that concatenates an event's fields and field values into a single field. (The events we're dealing with have an unpredictable set of fields, so I couldn't figure out a way to do it in SPL.)

When run on a stand-alone Splunk Enterprise instance, it works fine. However, when run in a clustered environment, it results in an error (one message per indexer node):

[<indexer hostname>] Streamed search execute failed because: Error in 'condensefields' command: External search command exited unexpectedly with non-zero error code 1..

I have installed the app that contains the custom command on both the search heads and the indexers.

Setup:

- Oracle Linux Server 7.8

- Splunk Enterprise 7.2.6

Search Example:

index=_audit

| condensefields _time, user, action, info, _raw

| table _time, user, action, info, details

App (was not able to upload compressed folder):

- <app>
  - bin
    - condensefields.py
  - default
    - app.conf
    - commands.conf
    - searchbnf.conf
  - lib
    - splunklib
  - metadata
    - default.meta

bin/condensefields.py:

    #!/usr/bin/env python

    import sys
    import os

    sys.path.insert(0, os.path.join(os.path.dirname(__file__), "..", "lib"))
    from splunklib.searchcommands import \
        dispatch, StreamingCommand, Configuration, Option, validators


    @Configuration()
    class CondenseFields(StreamingCommand):
        """ Condense fields of an event into one field.

        ##Syntax

        | condensefields <fields>

        ##Description

        Condenses all of the fields, except ignored fields, from the event into one field in a key-value format.

        """
        def stream(self, events):
            for event in events:
                fields_to_condense = filter(lambda key: key not in self.fieldnames, event.keys())
                condensed_str = ''
                is_first = True
                for key in fields_to_condense:
                    value = event[key]
                    if not value or len(value) == 0:
                        continue
                    if not is_first:
                        condensed_str += '|'
                    else:
                        is_first = False
                    if isinstance(value, list):
                        value = '[\'' + '\', \''.join(value) + '\']'
                    condensed_str += key + '=' + value
                event['details'] = condensed_str
                yield event


    dispatch(CondenseFields, sys.argv, sys.stdin, sys.stdout, __name__)

default/app.conf:

    [install]
    is_configured = false
    build = 1

    [ui]
    is_visible = false
    label = commands

    [launcher]
    author = Some Rando
    description = Provides custom commands.
    version = 1.0.0

default/commands.conf:

    # [commands.conf]($SPLUNK_HOME/etc/system/README/commands.conf.spec)
    [condensefields]
    chunked = true

default/searchbnf.conf:

    # [searchbnf.conf](http://docs.splunk.com/Documentation/Splunk/latest/Admin/Searchbnfconf)
    [condensefields-command]
    syntax = condensefields
    shortdesc = Condense fields of an event into one field.
    description = Condenses all of the fields, except ignored fields, from the event into one field in a key-value format.
    content1 = A typical use-case where all of the fields, except for a defined subset, are condensed into the a field with the specified format.
    example1 = | condensefields _time, event_name, application
    category = streaming
    tags = format

metadata/default.meta:

    []
    access = read : [ * ], write : [ admin, power ]
    export = system


Hi @GindiKhangura ,

I was able to reproduce this in my environment with the app as attached. It worked fine on the standalone node, and also when I put a non-streaming command (head 1000) before the streaming command in the search, but as soon as the command was streamed across the indexers, I received the same error.

I looked into the search.log for the search on one of the indexers and found the traceback.

10-08-2020 17:53:52.554 ERROR ChunkedExternProcessor - stderr: Traceback (most recent call last):

10-08-2020 17:53:52.554 ERROR ChunkedExternProcessor - stderr: File "/opt/splunk/var/run/searchpeers/E138C522-1210-4D6F-AD0B-C91FDB3E95D8-1602178084/apps/testapp/bin/condensefields.py", line 7, in <module>

10-08-2020 17:53:52.554 ERROR ChunkedExternProcessor - stderr: from splunklib.searchcommands import \

10-08-2020 17:53:52.555 ERROR ChunkedExternProcessor - stderr: ImportError: No module named splunklib.searchcommands

10-08-2020 17:53:52.556 ERROR ChunkedExternProcessor - EOF while attempting to read transport header

10-08-2020 17:53:52.556 ERROR ChunkedExternProcessor - Error in 'condensefields' command: External search command exited unexpectedly with non-zero error code 1.

10-08-2020 17:53:52.556 ERROR SearchPipelineExecutor - sid:remote_sh1_1602179632.20_86CF40A2-33DE-4558-8481-6CFF1E8B36D4 Streamed search execute failed because: Error in 'condensefields' command: External search command exited unexpectedly with non-zero error code 1..

The key line is "ImportError: No module named splunklib.searchcommands". Notice also that the app is delivered in the knowledge bundle and run from /opt/splunk/var/run/searchpeers/E138C522-1210-4D6F-AD0B-C91FDB3E95D8-1602178084/apps/testapp/bin/condensefields.py, NOT from /opt/splunk/etc/slave-apps/testapp/bin/condensefields.py.

I looked into that /var/run/searchpeers/<SH_GUID>-<SID>/apps/testapp/ directory: /lib/ does not get replicated to the indexer, but /bin does.

To resolve this issue, I moved splunklib from /lib to /bin and removed the sys.path.insert line that pointed at /lib, so that the script imports splunklib from its own directory.

After this change, the command was able to stream properly.
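A minimal sketch of the import setup described above, assuming splunklib may sit either next to the script (in bin, as in this fix) or in a sibling lib directory (as in the original app layout). The helper names `candidate_lib_dirs` and `extend_sys_path` are hypothetical and not part of splunklib or Splunk. The code only computes and registers directories.

```python
import os
import sys


def candidate_lib_dirs(script_path):
    """Return directories that might contain splunklib, most preferred first.

    Hypothetical helper: the script's own directory (bin) comes first, then
    the sibling lib directory used by the original app layout.
    """
    bin_dir = os.path.dirname(os.path.abspath(script_path))
    lib_dir = os.path.normpath(os.path.join(bin_dir, "..", "lib"))
    return [bin_dir, lib_dir]


def extend_sys_path(script_path):
    """Prepend every candidate directory that exists to sys.path; return those added."""
    added = [d for d in candidate_lib_dirs(script_path) if os.path.isdir(d)]
    # Insert in reverse so the most preferred directory ends up first on sys.path.
    for directory in reversed(added):
        sys.path.insert(0, directory)
    return added
```

In a command script, this would be called as `extend_sys_path(__file__)` before the `from splunklib.searchcommands import ...` line. When lib is absent from the replicated bundle, only bin is added, so the import still resolves if splunklib was moved there.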


I'm not sure whether Splunk 7.2.6, the version you listed, supports this. Hopefully you've upgraded since then: 7.2.6 has been unsupported since April 2021, and this thread is just over a year old at the time of writing.

I'm writing because I never saw the appropriate answer added.

Since your custom search command (CSC) is a streaming command, you can set a replication whitelist in $SPLUNK_HOME/etc/apps/app_name/default/distsearch.conf to include the required libraries. The indexers then receive a copy of them, which fixes the error and removes any need to copy splunklib into the bin directory, a practice that is discouraged.

Happy Holidays and Happy Splunking


Looks promising, thanks!


@christopherrobe, I want to start by thanking you for this info! I spent a lot of time trying to figure out why my app failed in our production instance (clustered) while it was completely fine in my local standalone instance. Like you, I noticed that putting a non-streaming command, like `noop` or `head`, before my CSC made it work fine in the clustered instance.

There are a few things, however, that make me concerned about putting splunklib under /bin.

First, AppInspect throws a warning if you move the splunklib folder under /bin:

    {
        "description": "Check splunklib dependency should not be placed under app's bin folder. Please refer to\n https://dev.splunk.com/view/SP-CAAAER3 and https://dev.splunk.com/view/SP-CAAAEU2 for more details/examples.",
        "messages": [
            {
                "code": "\"splunklib is found under `bin` folder, this may cause some dependency management \"",
                "filename": "check_application_structure.py",
                "line": 228,
                "message": "splunklib is found under `bin` folder, this may cause some dependency management errors with other apps, and it is not recommended. Please follow examples in Splunk documentation to include splunklib. You can find more details here: https://dev.splunk.com/view/SP-CAAAEU2 and https://dev.splunk.com/view/SP-CAAAER3",
                "result": "warning",
                "message_filename": null,
                "message_line": null
            }
        ],
        "name": "check_splunklib_dependency_under_bin_folder",
        "tags": [
            "splunk_appinspect",
            "cloud",
            "private_app"
        ],
        "result": "warning"
    },

The second concern is that the guide at https://dev.splunk.com/enterprise/docs/developapps/appanatomy/#Considerations-for-Python-code-files also instructs the user to put splunklib under /lib.

Since the AppInspect message tells you there may be dependency issues putting splunklib in /bin, yet it appears this is necessary for streaming CSCs to work in clustered environments, is there some underlying issue that needs to be addressed?


What's said here is spot on. The best practices on SPL2 and AppInspect warn against doing this, yet it's the only way to get things working.


Thank you, that worked.

I hadn't checked the search.log on the indexer; I was only looking at the one from the search head in the Job Inspector.


Awesome!

I also would like to note that you're able to get search.logs from the indexers as well in the job inspector under "Search Job Properties". Towards the bottom, under "Additional Info" there should be a link for each search.log from its respective indexer.


Have you looked at the search log to see the reason for the error?

If this reply helps you, Karma would be appreciated.


Yes, I have looked at the search.log file from the Job Inspector.

I searched for the terms "error", "warn", and "fatal", but none of them were present. I read through the whole log and did not see any messages that would suggest that anything failed.


When debugging my external command, I found it most useful to search for the command name rather than "error" and the like. IIRC, tracebacks were not reported as errors.

Also, consider using debug logging:

| noop log_DEBUG=*
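The tip about searching for the command name rather than "error" can be sketched as a small filter over a saved search.log. This is only an illustration: the function name `find_command_lines` is hypothetical, the log is assumed to be already read into a string, and the `ChunkedExternProcessor` component name is taken from the log excerpt quoted elsewhere in this thread.

```python
def find_command_lines(log_text, command_name):
    """Return search.log lines that mention the command or the chunked-protocol
    processor, which is where the external script's stderr shows up."""
    needles = (command_name, "ChunkedExternProcessor")
    return [
        line
        for line in log_text.splitlines()
        if any(needle in line for needle in needles)
    ]


# Sample lines: the first is made up for contrast, the other two are shortened
# from the traceback quoted in this thread.
SAMPLE_LOG = "\n".join([
    "10-08-2020 17:53:52.100 INFO  SearchParser - parsing search",
    "10-08-2020 17:53:52.555 ERROR ChunkedExternProcessor - stderr: ImportError: No module named splunklib.searchcommands",
    "10-08-2020 17:53:52.556 ERROR SearchPipelineExecutor - Error in 'condensefields' command: External search command exited unexpectedly",
])

for hit in find_command_lines(SAMPLE_LOG, "condensefields"):
    print(hit)
```

Matching on the component name as well as the command name catches stderr lines, such as the ImportError, that never mention the command itself.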


Thank you for the tip.

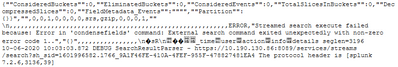

I tried it out, but no luck in finding the actual cause of the error. The message that I see in the search.log is the same as the one that is seen in the Search app. It appears to just fail with that one message:

(That message is a part of a much larger debug message from the SearchResultParser)

The error code is confusing, as it shows up as "1.."; I wonder whether that's supposed to be equivalent to "1xx".
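One plausible reading, which the thread does not confirm, is that "1.." is plain exit code 1: the inner message in the indexer's search.log ends with "code 1." and the wrapping "Streamed search execute failed because: ..." message appears to add its own period. What is certain is that a Python process that dies from an uncaught exception, such as a failed import, exits with status 1. A small sketch, using a deliberately nonexistent module name:

```python
import subprocess
import sys


def exit_code_of(snippet):
    """Run a Python snippet in a child interpreter; return (exit status, stderr text)."""
    result = subprocess.run(
        [sys.executable, "-c", snippet],
        stdout=subprocess.PIPE,
        stderr=subprocess.PIPE,
    )
    return result.returncode, result.stderr.decode("utf-8", "replace")


# An uncaught exception (here a failed import) makes the interpreter exit with status 1.
code, stderr_text = exit_code_of("import module_that_does_not_exist_xyz")
print(code)
print(stderr_text)
```

The traceback goes to the child's stderr, which is why the cause shows up only in the search.log of the node that actually ran the script.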


I'm not sure why the command is failing.

Have you tried doing the job using SPL?

| eval details = "_time="._time." user=".user." action=".action." info=".info." _raw="._raw


You mean completely getting rid of the command and doing it via SPL?

The query that I provided in this thread was just meant to be a short and simple example that reproduces the issue. The actual use case for the command is to take all fields in an event, excluding the ones specified in the command's field arguments, and concatenate them into one field. Since the events that I'm dealing with can have an unpredictable set of fields (I cannot keep a list of them), I was not able to figure out a way to do it via SPL (including macros).
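The behaviour described here can be sketched without Splunk at all. In the sketch below a plain dict stands in for an event record; the function name `condense` and its `separator` parameter are hypothetical and only mirror the logic of the posted `stream` method.

```python
def condense(event, excluded_fields, separator="|"):
    """Join every non-excluded, non-empty field of an event as key=value pairs."""
    parts = []
    for key, value in event.items():
        if key in excluded_fields or not value:
            continue
        if isinstance(value, list):
            # Multivalue fields are rendered as a quoted list, as in the posted command.
            value = "['" + "', '".join(value) + "']"
        parts.append(key + "=" + str(value))
    return separator.join(parts)


event = {
    "_time": "1602179632",
    "user": "admin",
    "action": "search",
    "tags": ["a", "b"],
    "info": "",
}
print(condense(event, ["_time"]))
```

Because the field list is taken from the event itself, no list of expected fields is needed, which is the part that is hard to express in plain SPL.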


Thanks for clarifying that. Yes, I meant using SPL instead of the external command, but nothing I can come up with does what you want.

I'm out of ideas. Sorry.


Thank you for trying!