Data loss after shutdown Splunk

Hi, I used the "Add Data: Files and Directories" function to add a 200MB CSV file from my hard drive to Splunk Enterprise 8.0.2 (trial version, macOS). I configured it with a custom sourcetype and a custom index called "bigdbook".

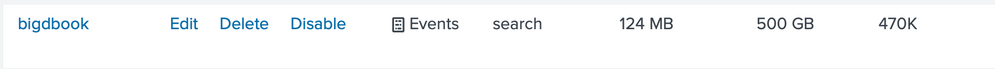

The file uploaded successfully. I checked under Settings > Data: Indexes and saw that the index's Current Size column had grown to 124MB.

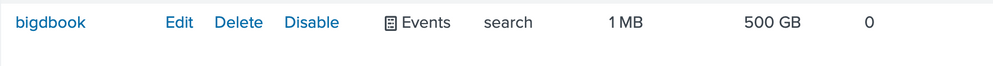

But after I shut down Splunk and started it again, I ran several searches and found that the data I had added was completely gone: the Current Size column in the Indexes section had returned to the default value of 1MB.

So far, I have tried reinstalling Splunk and switching to "Add Data: Monitor" but the problem remains the same.

Please help me regarding this.

Yeah, your index needs a bigger frozenTimePeriodInSecs; by default it's around six years.

Why did a restart of Splunk trigger this? When you add new data, it lives in a hot bucket. Hot buckets are still being written to and are exempt from freezing. Once you restart Splunk, all hot buckets roll to warm and get checked for freezing. Since your data is older than your frozenTimePeriodInSecs, Splunk will freeze it, which by default means deleting it.
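To make the sequence concrete, here is a rough Python model of the check described above. It is only a sketch of the idea, not Splunk's actual implementation: the `Bucket` class, `should_freeze` function and the sample dates are hypothetical, and the only real value is the documented default of 188697600 seconds.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

DEFAULT_FROZEN_SECS = 188_697_600  # Splunk's documented default, about 6 years


@dataclass
class Bucket:
    """Hypothetical stand-in for an index bucket (not a Splunk API)."""
    state: str            # "hot", "warm" or "cold"
    latest_event: float   # epoch seconds of the newest event in the bucket


def should_freeze(bucket: Bucket, now: float,
                  frozen_secs: int = DEFAULT_FROZEN_SECS) -> bool:
    """Return True if the bucket is past the retention period."""
    if bucket.state == "hot":
        return False  # hot buckets are still being written to, so they are exempt
    # The age of the newest event in the bucket decides whether it freezes.
    return now - bucket.latest_event > frozen_secs


# Illustrative dates only: an event from 2000, checked in 2020.
event_time = datetime(2000, 1, 15, tzinfo=timezone.utc).timestamp()
now = datetime(2020, 4, 1, tzinfo=timezone.utc).timestamp()

before_restart = Bucket(state="hot", latest_event=event_time)
after_restart = Bucket(state="warm", latest_event=event_time)

print(should_freeze(before_restart, now))  # hot bucket: exempt
print(should_freeze(after_restart, now))   # warm bucket holding 20-year-old data
```

The same data gives two different answers purely because the bucket changed state, which is why the loss only shows up after a restart.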

I edited the frozenTimePeriodInSecs and it worked. Thank you.

I will give you a hint: it has nothing to do with restarting Splunk. Other than that, it could be many, many things.

When you ingest the data and search for it before shutting down Splunk, what are the timestamps of your events?

Here is an event:

I also defined a sourcetype so Splunk could parse timestamps:

[FlightData.csv]

BREAK_ONLY_BEFORE_DATE =

CHECK_FOR_HEADER = true

DATETIME_CONFIG =

INDEXED_EXTRACTIONS = csv

KV_MODE = none

LINE_BREAKER = ([\r\n]+)

MAX_DAYS_AGO = -1

NO_BINARY_CHECK = true

SHOULD_LINEMERGE = false

TIMESTAMP_FIELDS = FlightDate,CRSDepTime

TIME_FORMAT = %Y-%m-%d%H%M

category = Structured

description = Comma-separated value format. Set header and other settings in "Delimited Settings"

disabled = false

pulldown_type = true
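For anyone following along, this is roughly how the TIME_FORMAT above would read a timestamp built from the two fields. It is a Python sketch, not Splunk's parser. It assumes the FlightDate and CRSDepTime values are joined with no separator (which is what the TIME_FORMAT implies), and the sample values are made up for illustration.

```python
from datetime import datetime

TIME_FORMAT = "%Y-%m-%d%H%M"  # copied from the sourcetype stanza above


def parse_event_time(flight_date: str, crs_dep_time: str) -> datetime:
    """Parse a timestamp from the two CSV fields.

    Assumption: the field values are concatenated without a separator
    before the format is applied.
    """
    return datetime.strptime(flight_date + crs_dep_time, TIME_FORMAT)


# Hypothetical sample values, not taken from the real file.
ts = parse_event_time("2000-01-15", "0930")
print(ts.isoformat())
```

The point is that the event time comes from the data itself, so events land in the index with dates from the year of the flight, not the day of the upload.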

Ok, so the data is dated as being from the year 2000...

Unless you have defined a retention period of more than 20 years for your bigdbook index...

Hi nickhillspcl,

Thanks for your response. I just checked my index's storage optimization section. The current Tsidx Retention Policy is "Disable Reduction", so I don't think this is what is causing the problem.

Nope. That’s not the setting. What’s your frozen period?

https://docs.splunk.com/Documentation/Splunk/8.0.2/Indexer/Setaretirementandarchivingpolicy

In particular, this section:

Freeze data when it grows too old

You can use the age of data to determine when a bucket gets rolled to frozen. When the most recent data in a particular bucket reaches the configured age, the entire bucket is rolled.

To specify the age at which data freezes, edit the frozenTimePeriodInSecs attribute in indexes.conf. This attribute specifies the number of seconds to elapse before data gets frozen. The default value is 188697600 seconds, or approximately 6 years. This example configures the indexer to cull old events from its index when they become more than 180 days (15552000 seconds) old:

[main]

frozenTimePeriodInSecs = 15552000

Specify the time in seconds.

Restart the indexer for the new setting to take effect. Depending on how much data there is to process, it can take some time for the indexer to begin to move buckets out of the index to conform to the new policy. You might see high CPU usage during this time.
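The figures quoted from the docs are easy to sanity-check. A small Python sketch follows; the helper names are just for illustration, and the year conversion assumes 365-day years.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86400


def days_to_seconds(days: int) -> int:
    """Convert a retention period in days to the seconds value the setting expects."""
    return days * SECONDS_PER_DAY


def seconds_to_years(seconds: int) -> float:
    """Approximate conversion, using 365-day years."""
    return seconds / (365 * SECONDS_PER_DAY)


print(days_to_seconds(180))                     # the 180-day example from the docs
print(round(seconds_to_years(188_697_600), 2))  # the default, in years
```

So the default works out to a little under six years, which is well short of data dated 2000.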

It worked. Because the data is dated 20 years ago, I set the frozenTimePeriodInSecs attribute in indexes.conf to 662,256,000 (which is 21 years) to be safe.
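In case it helps someone else pick a value, here is a small Python sketch that checks whether a retention period covers the oldest event and prints the matching stanza. The function names and the two dates are hypothetical placeholders. The index name and the 21-year figure are the ones from this thread, and years are counted as 365 days.

```python
from datetime import datetime, timezone

SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # 31,536,000; ignores leap days


def retention_for_years(years: int) -> int:
    """Retention period in seconds for a whole number of 365-day years."""
    return years * SECONDS_PER_YEAR


def covers(oldest_event: datetime, now: datetime, frozen_secs: int) -> bool:
    """True if an event this old is still inside the retention window."""
    return (now - oldest_event).total_seconds() < frozen_secs


def render_stanza(index: str, frozen_secs: int) -> str:
    """Format the setting as it would appear in indexes.conf (plain integer, no commas)."""
    return f"[{index}]\nfrozenTimePeriodInSecs = {frozen_secs}\n"


# Placeholder dates: oldest event at the start of 2000, checked in April 2020.
oldest = datetime(2000, 1, 1, tzinfo=timezone.utc)
today = datetime(2020, 4, 1, tzinfo=timezone.utc)

frozen = retention_for_years(21)
print(covers(oldest, today, 188_697_600))  # default retention
print(covers(oldest, today, frozen))       # 21-year retention
print(render_stanza("bigdbook", frozen))
```

Keep in mind the window moves with the clock, so a 21-year setting only leaves about a year of headroom for data from 2000.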

Thank you very much, nickhillscpl.