- Problems with earliest consultations higher than 7...

Hello,

I have a strange problem in my Splunk Enterprise instance and have not found a solution.

I recently upgraded Splunk Enterprise from version 6.2.4 to 6.3.0. Since then, searches over time ranges longer than 7 days, for example "earliest=-30d@d", have had problems.

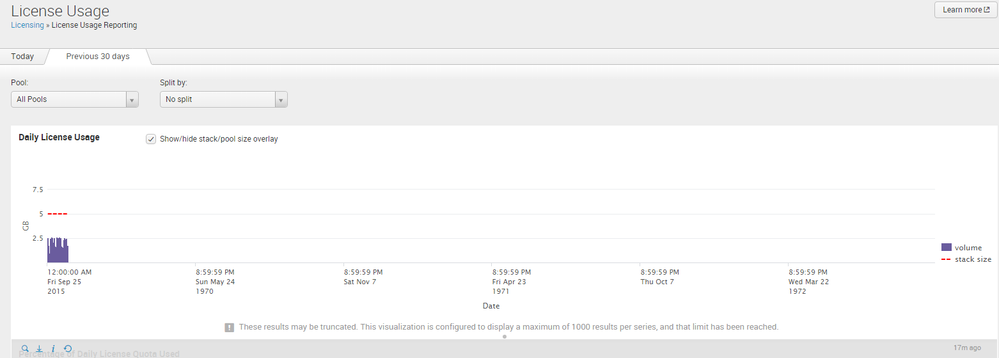

With timechart, the statistics run from left to right, from the earliest to the latest time. However, past the latest time on the right, the time axis jumps back into the past, reaching September 1972.

This problem occurs with any index, including Splunk's own internal indexes, for example _internal.

When I use ranges of 7 days or less, for example "earliest=-7d@d", this problem does not occur.

Does anyone have any suggestions on how to solve this problem?

Thank you.

Steps to reproduce:

1 - index=_internal earliest=-1d@d | timechart count by host - Ok

2 - index=_internal earliest=-7d@d | timechart count by host - Ok

3 - index=_internal earliest=-8d@d | timechart count by host - Invalid value "-8d@d" for time term 'earliest'

4 - index=_internal earliest=-10d@d | timechart count by host - Problem in the graph presentation: 23 September 1972 appears at the far right of the graph.

5 - index=_internal earliest=-30d@d | timechart count by host - Problem in the graph presentation: 23 September 1972 appears at the far right of the graph.

6 - index=_internal earliest=-90d@d | timechart count by host - Problem in the graph presentation: 23 September 1972 appears at the far right of the graph.

Wow.

1) Can I ask what timezone your Splunk Server is in?

2) Can you also double-check what the host thinks the current time is? My only idea is that the rather complex timezone and daylight-saving-time code is being thrown off WILDLY. Being in a rather unusual timezone could possibly contribute to this.

3) Can you also export these results as CSV? I'm curious to see the raw integers of the timestamp values here. If they are actually off as well then, er, even more wow.
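
For anyone wanting to run the check from point 3, here is a minimal Python sketch. It assumes the export has a column holding epoch seconds, hypothetically named `_time`; if your export renders times as formatted strings instead, this would need adapting. The helper name `suspicious_rows`, the `min_year` cutoff, and the sample rows are made up for illustration and are not taken from the original poster's data.

```python
import csv
import io
from datetime import datetime, timezone


def suspicious_rows(csv_text, time_field="_time", min_year=2000):
    """Return (epoch, UTC datetime) pairs whose year falls before min_year."""
    flagged = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        epoch = float(row[time_field])
        stamp = datetime.fromtimestamp(epoch, timezone.utc)
        if stamp.year < min_year:
            flagged.append((epoch, stamp))
    return flagged


# Made-up sample: one plausible 2015 bucket and one bucket that lands in 1972.
sample = "_time,count\n1445299200,120\n86054400,0\n"

for epoch, stamp in suspicious_rows(sample):
    print(epoch, stamp.isoformat())
```

If rows like the second one show up in the real export, the raw values themselves are off. If they do not, the problem is more likely in how the values are rendered.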

Hello,

Nice. The problem was indeed related to the timezone. I am in Brazil, timezone GMT-03:00, and we entered Daylight Saving Time on October 18, a date that coincided with the Splunk version upgrade. Because Splunk did not automatically interpret Brazil's DST, I ran into this problem. I set my user's timezone to GMT-02:00 and it worked perfectly. Thank you very much for your support. Now I will look for a way to configure daylight saving time in the GMT-03:00 timezone.

Thank you.
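
To illustrate why switching the user timezone from GMT-03:00 to GMT-02:00 changes what is displayed, here is a small Python sketch that renders the same instant under both fixed offsets. It is only an illustration of the offset difference, not of how Splunk handles timezones internally. The names `STANDARD`, `DST`, and `render` and the chosen instant are assumptions for the example.

```python
from datetime import datetime, timedelta, timezone

# Fixed offsets named in the post. A real tz database entry would switch
# between them at the DST changeover instead of staying fixed.
STANDARD = timezone(timedelta(hours=-3))  # GMT-03:00
DST = timezone(timedelta(hours=-2))       # GMT-02:00


def render(epoch, tz):
    """Format epoch seconds as wall-clock time in the given fixed offset."""
    return datetime.fromtimestamp(epoch, tz).strftime("%Y-%m-%d %H:%M:%S %z")


# An arbitrary instant after the October 18, 2015 changeover from the post.
instant = int(datetime(2015, 10, 20, 15, 0, 0, tzinfo=timezone.utc).timestamp())

print(render(instant, STANDARD))  # 2015-10-20 12:00:00 -0300
print(render(instant, DST))       # 2015-10-20 13:00:00 -0200
```

The epoch value is identical in both calls. Only the offset applied when formatting differs, which is why a wrong DST assumption shifts every label by an hour.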

Assuming what 6.X does is similar to what 4.X and 5.X did, the UI actually gets from splunkd a JSON representation of the current timezone's entire Olson table, containing all DST offset changes. This gives it the ability to correctly translate any epoch time value to a string-formatted time. However... if the operating system's idea of where timezone offset boundaries are is weird or wrong (or in this case insanely wrong), the potential for havoc is somewhat limitless. Sounds like you found a workaround to either change the timezone or tweak DST handling on the host to make the bug go away.
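
As a rough sketch of the idea described above, translating an epoch time through a table of offset changes can look like the following Python. This is not Splunk's actual data format or code. The `TRANSITIONS` table, its two illustrative entries, and the helper names `offset_at` and `format_epoch` are all hypothetical.

```python
from bisect import bisect_right
from datetime import datetime, timedelta, timezone

# Hypothetical table: (epoch at which an offset takes effect, UTC offset in seconds).
# The entries are illustrative only; a real Olson table has many more transitions.
TRANSITIONS = [
    (0, -3 * 3600),
    (int(datetime(2015, 10, 18, 3, 0, tzinfo=timezone.utc).timestamp()), -2 * 3600),
]


def offset_at(epoch, table=TRANSITIONS):
    """Return the UTC offset (seconds) of the last transition at or before epoch."""
    starts = [start for start, _ in table]
    idx = bisect_right(starts, epoch) - 1
    if idx < 0:
        raise ValueError("epoch precedes the first transition in the table")
    return table[idx][1]


def format_epoch(epoch, table=TRANSITIONS):
    """Format epoch seconds using whichever offset the table says applies."""
    tz = timezone(timedelta(seconds=offset_at(epoch, table)))
    return datetime.fromtimestamp(epoch, tz).strftime("%Y-%m-%d %H:%M:%S %z")


changeover = TRANSITIONS[1][0]
print(format_epoch(changeover - 1))  # 2015-10-17 23:59:59 -0300
print(format_epoch(changeover))      # 2015-10-18 01:00:00 -0200
```

If the table handed to a formatter like this has a boundary in the wrong place, every label after that boundary is rendered with the wrong offset, which fits the kind of havoc described above.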

brandili,

Great! I'm glad this is resolved, and it looks like sideview was pretty much right on the money.

Do you mind if I rearrange these comments, make sideview's comment the actual answer then Accept it for you?

Likewise, thanks for the cleanup.

Ok Rich. Thanks!

Another thought or two:

Close out of your browsers. Reboot. Restart browsers, clear cache, restart browser again. What happens? Try a different browser. What happens? I would say if those don't work, try restarting Splunk on the search head involved. Then maybe clearing cache again. 🙂

And I too would like to see a few of the events as raw data. Even if you could look at them and see if the timestamps in the events actually match what you are seeing or not.

My guess is it's something very simple and likely browser or cache (somewhere!) related, but this still makes me want to double sideview's "more wow" into "double plus more wow."