Lower resolution chart when reproducing grafana/prometheus in Splunk with same data

I'm trying to reproduce a collection of Grafana dashboards we have using Splunk. Generally it's working okay, but there's one area I can't get my head around: I seem to get better resolution on the charts with Prometheus/Grafana than I see with Splunk.

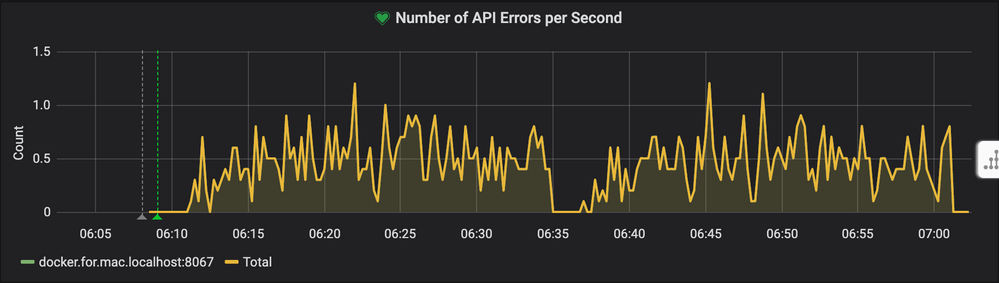

In Grafana I have a chart that looks like this:

Where the prometheus query is:

irate(mattermost_http_errors_total{instance=~"$server"}[1m])

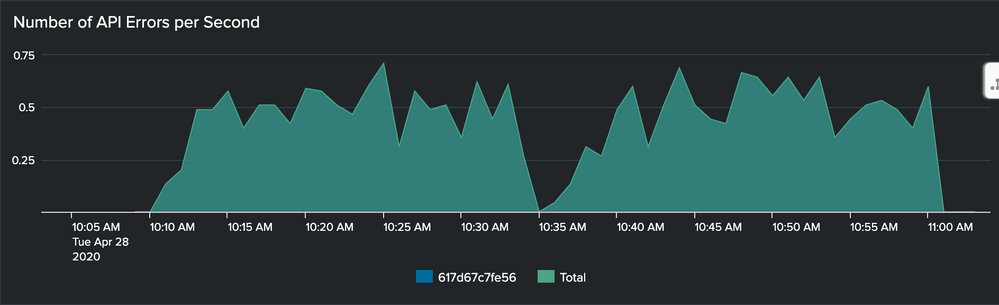

In Splunk my chart looks like this:

And the associated Splunk search is:

| mstats rate(_value) as count prestats=true WHERE metric_name="mattermost_http_errors_total" AND `mattermost_metrics` sourcetype=prometheus:metric AND (host ="617d67c7fe56") span=60s BY host

| timechart rate(_value) as count span=60s BY host

| addtotals

The general structure/trends seem reasonably close, but Grafana seems to have much finer resolution on the data.

- I'm scraping the system-under-test in 15s intervals in both cases.

- Hovering over the data points shows me that Grafana generates a value at every scrape interval, but Splunk only generates one value per span.

What I suspect: It's as if Grafana is doing a sliding 1 minute window, evaluated at each point in time that it has data, whereas Splunk is taking absolute, non-overlapping windows (one per span) and generating a single result for each.
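To make that suspicion concrete, here is a minimal Python sketch of the two behaviours as I understand them. It is not Prometheus or Splunk code: the function names and the sample counter values are made up for illustration, counter resets are ignored, and samples are assumed to be sorted by time. The sliding version mimics irate (rate between the two most recent samples in the trailing window, evaluated at every sample), and the fixed version assumes rate is computed as (latest - earliest) / (latest time - earliest time) inside each aligned span.

```python
from typing import Dict, List, Tuple

Sample = Tuple[int, float]  # (timestamp in seconds, counter value)


def sliding_irate(samples: List[Sample], window: int = 60) -> List[Tuple[int, float]]:
    """One point per sample: rate between the two most recent samples
    that both fall inside the trailing window (simplified irate)."""
    points = []
    for i in range(1, len(samples)):
        t_now, v_now = samples[i]
        t_prev, v_prev = samples[i - 1]
        if t_now - t_prev > window:
            continue  # previous sample is outside the window, no point emitted
        points.append((t_now, (v_now - v_prev) / (t_now - t_prev)))
    return points


def fixed_span_rate(samples: List[Sample], span: int = 60) -> List[Tuple[int, float]]:
    """One point per aligned span: (latest - earliest) / elapsed time
    using only the samples that fall inside that span."""
    buckets: Dict[int, List[Sample]] = {}
    for t, v in samples:
        buckets.setdefault(t - t % span, []).append((t, v))
    points = []
    for start in sorted(buckets):
        t0, v0 = buckets[start][0]
        t1, v1 = buckets[start][-1]
        if t1 > t0:  # need at least two samples to compute a rate
            points.append((start, (v1 - v0) / (t1 - t0)))
    return points


# Hypothetical counter scraped every 15s for two minutes.
samples = [(0, 0.0), (15, 3.0), (30, 3.0), (45, 9.0),
           (60, 9.0), (75, 10.0), (90, 16.0), (105, 16.0)]

print(len(sliding_irate(samples)))    # one point per scrape after the first
print(len(fixed_span_rate(samples)))  # one point per 60s span
```

With eight samples at 15s spacing, the sliding version yields seven points and the fixed-span version yields two, which matches the difference in resolution I see between the two charts.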

Is there something I'm missing with how to author the mstats searches? Is there some way to achieve a similar result? Am I correct in what I suspect is happening?

Note: My Grafana dashboard also has a "Total" series, equivalent to what addtotals produces in my Splunk search. I just didn't copy that query here.