Why is Alert Triggering Delayed and why are there Missing Alerts?

Hi all, hope you can help address a pretty serious concern I'm having.

I have several scheduled alerts configured in Splunk to run hourly. Each one runs its query every hour over the past hour of events. I've configured them not to Throttle and to Trigger "Once", and each one sends an email when it triggers.

I recently ran the query manually in Splunk search and found two problems.

1) There were results in different hours: one from 14:00-15:00 and another from 17:00-18:00. But I received only one email, and it was massively delayed.

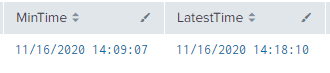

And just look at this trigger time! To clarify, this is for the 14:00 alert! Not the 17:00 one! And then it doesn't even include the 17:00 results!

Trigger Time: 17:25:48 HKT on November 16, 2020.

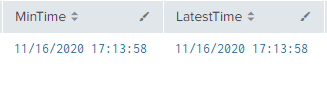

2) I checked the results for an entirely different day and found that I had not received an email about them at all. When I run my query in Splunk search hour by hour, I can definitely see the results, so the problem isn't my query.

| MinTime | LatestTime |
| --- | --- |
| 11/18/2020 10:39:32 | 11/18/2020 10:42:25 |

I know one possible reason my searches are so delayed is that I have a large number of scheduled searches running (100+?), which affects queueing. But is it really this bad? How can no email be sent at all?

I'm really at a loss as to how to check this further. I've checked my mailbox settings and confirmed that I haven't blocked or junked any of the emails sent by Splunk. I don't know what my next step should be. Can someone please help? Thank you.

@madsmils - Kindly check Splunk's internal logs.

In my case the cause turned out to be an oversized email, but there could be many other causes. Please check the logs related to your alert: the scheduler, email, alert action, and Python logs.
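As a starting point, something like the search below might show whether the scheduler ran, skipped, or delayed the alert. This is only a sketch: `"My Hourly Alert"` is a placeholder for your saved search name, and the field names (`status`, `reason`, `scheduled_time`, `dispatch_time`, `run_time`, `result_count`, `alert_actions`) are the ones usually seen in scheduler events, so they may differ on your version.

```
index=_internal sourcetype=scheduler savedsearch_name="My Hourly Alert"
| eval delay_sec = dispatch_time - scheduled_time
| table _time savedsearch_name status reason delay_sec run_time result_count alert_actions
| sort - _time
```

A `status` of `skipped` with a `reason` about concurrency limits, or a large `delay_sec`, would point at scheduler load rather than at the query. If the search ran and `alert_actions` lists email but nothing arrived, searching `index=_internal source=*python.log* sendemail` over the same time range may surface errors from the email action.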

Did you ever find an answer for this? Dealing with the same issues right now.